The UFO and UAP news cycle is loud on purpose: viral cockpit clips, partisan sound bites, and daily promises of “alien disclosure” that never quite arrive. What most of those arguments actually lean on is older than the internet, and usually misread: Project Blue Book’s commonly cited final tally of 12,618 total reports, with 701 classified as “unidentified” (National Archives summary of Project Blue Book records). Those two numbers are why Blue Book still feels like a file that never really closed, even though the program did.

Your real decision is practical, not philosophical. Do you treat today’s UFO news as signal or noise? And do you treat Blue Book as proof of a government UFO cover-up, or as proof that most reports reduce to mundane explanations once they’re investigated? “UFO” itself bakes in ambiguity, because it describes what a witness could not identify in the moment, not what the object “was.” “UAP” is the modern government term for the same problem set, and it arrives with new offices, new reporting pipelines, and a bigger audience that often confuses attention with evidence.

The central tension sits inside one word: unidentified. In Blue Book, “unidentified” and “unexplained” function as case-disposition categories, not conclusions about origin. That procedural meaning is easy to miss because “unidentified” sounds like an answer, when it’s actually an administrative label that shows up across government writing to mean “not determined” in context, from “unidentified” participants to “unidentified” incidents. Blue Book’s 701 unresolved cases are the residue that fuels decades of speculation, but the record’s language forces a stricter reading: a case can remain unresolved because the information in the file never supports a confident identification, not because it proves non-human intelligence.

That disciplined reading matters now because Blue Book still anchors modern disclosure arguments, and the modern reporting ecosystem keeps expanding. The Department of Defense’s All-domain Anomaly Resolution Office (AARO) published its Report on the Historical Record of U.S. Government Involvement with Unidentified Anomalous Phenomena, Volume 1 in 2024 (AARO Historical Record Report, Volume 1), and AARO and the Department of Defense published a consolidated annual UAP report covering May 1, 2023 to June 1, 2024 (FY24 Consolidated Annual Report on UAP). The rest of this piece sticks to what the Air Force recorded, how dispositions worked, why 701 stayed unresolved, and what the public record actually supports when people claim “disclosure,” without asserting aliens.

To read those headline numbers correctly, you have to start with the institutional problem Blue Book was built to handle: sustained reporting that demanded triage, investigation, and paperwork discipline.

Why Blue Book was created

Project Blue Book existed because unidentified reports had become a management problem, not a campfire story. The Air Force needed a standing mechanism to absorb, triage, and disposition incoming sightings in a way that reduced uncertainty at scale: operational uncertainty for commanders, scientific uncertainty for analysts, and public uncertainty as rumors and headlines accelerated faster than official answers.

Blue Book was not the first attempt. Earlier Air Force efforts, Project Sign and Project Grudge, preceded it and exposed the same friction point: ad hoc studies do not keep pace with sustained reporting. Project Blue Book launched in March 1952 and became the long-running program, commonly framed as 1952 to 1969, precisely because the reporting volume and attention did not behave like a short-lived spike.

The mission tension sat in plain view. First, there was threat assessment: any unknown in controlled airspace forces the question of hostile capability, misidentification risk, and radar and communications reliability. Second, there was scientific and technical curiosity: the Air Force still had to sort sensor artifacts from atmospheric effects, aircraft misperceptions, and genuinely anomalous observations, because each category teaches something different about systems and environments. Third, there was public messaging pressure: unexplained reports create demand for official comment, and silence gets interpreted as either incompetence or concealment.

Note: direct quotations of Blue Book’s official mission or verbatim objectives should be verified against primary Air Force documentation in the original files before being presented as exact wording.

In practice, those pressures pulled in different directions. Aggressive security framing invites escalation and secrecy; aggressive scientific framing invites time, instrumentation, and tolerance for unresolved cases; aggressive public reassurance pushes toward quick identifications and clean narratives. Blue Book sat in the middle, and that compromise shaped what it investigated hard, what it closed quickly, and what it treated as an administrative problem to be processed.

The first major external shaping force arrived early. The Robertson Panel, a CIA-convened panel meeting January 14 to 18, 1953 at the direction of the Director of Central Intelligence, evaluated the UFO issue as a national-security information problem, not as a standalone mystery. Its report concluded that about 90 percent of UFO sightings could be readily identified with meteorological, astronomical, or natural causes (Robertson Panel report, CIA reading room).

That conclusion did more than rank likely explanations. It set an expectation for institutional posture: treat most reports as solvable through ordinary causes, and treat the residual uncertainty as something to be contained so it does not degrade readiness or public confidence. Blue Book’s continued existence can therefore signal two truths at once: it reflects genuine inquiry into reports the military could not ignore, and it functions as institutional pressure relief by giving the public and the chain of command a visible outlet for answers. That dual role is exactly why Blue Book sits at the center of government UFO cover-up narratives.

Read Blue Book dispositions with that ambiguity in mind, because the same case file can record both an investigative impulse and an organizational need to keep uncertainty from spreading through operations and paperwork.

Those competing pressures didn’t just shape what Blue Book was for; they shaped how investigations were conducted and, ultimately, which cases could be closed on the record.

How cases were investigated

Blue Book’s results mostly tracked the quality of information it could collect. Cases got “solved” when the underlying facts were checkable against independent records; they stayed unresolved when the report collapsed into untestable detail, missing logs, and conflicting recollections.

The workflow was investigative, not mystical: turn a sighting narrative into a timeline, then test it against external data streams that were hard to argue with.

- Intake reports from the public and from military channels, capturing time, place, direction of view, duration, apparent motion, and any sensor involvement (radar, photos, film).

- Interview witnesses to reconstruct a single timeline, then lock down specifics that can be checked later: azimuth, elevation, and the exact start and stop times.

- Cross-check astronomy against the reported bearing and time to confirm or eliminate bright stars and planets, and to assess whether a meteor fireball window fits the duration and movement described.

- Cross-check weather for visibility, cloud layers, winds aloft, and conditions associated with optical effects; temperature inversions mattered because they can bend light and distort apparent position and motion.

- Cross-check known aerial activity against the time and location: aircraft routes and local flight activity, plus balloon operations when launches and drift could be traced to the observation window.

- Correlate radar where applicable, treating it as supporting data only if time stamps, range, and azimuth could be matched to the visual report and to known traffic.

- Handle photo and film carefully when available, prioritizing original negatives, first-generation prints, and provenance; analysis focused on whether the media contained measurable cues (focus, exposure, reference objects) or only ambiguous blobs.

- Dispose of the case administratively by category based on what the file could support, not on what the story suggested.

This is why the “common explanations” of the era were not a grab bag. Each mapped to a specific verification path: stars/planets to sky position at a timestamp, meteors to brief duration and directionality, balloons to launch records and wind drift, aircraft to flight activity and navigation lighting, temperature inversions to weather structure, and hoaxes to internal inconsistencies or physical impossibilities that show up once the timeline and media chain are examined.

Most real-world reports fail at the same friction points: timing precision, geometry, and corroboration. A sighting at “around 9” with no compass bearing cannot be cleanly matched to sky charts, flight activity, or weather observations. Witnesses also compress time, overestimate size, and misread distance, which turns speed and altitude estimates into numerically impressive but meaningless outputs.

Unresolved cases often reflect insufficient or poor-quality data: no second witness from a different vantage point, low-detail descriptions, inconsistent accounts across interviews, missing sensor logs, or radar reports that cannot be time-aligned to the visual event. That evidentiary shortage is not proof of an extraordinary origin; it is a documentation failure that prevents elimination of ordinary explanations.

Blue Book’s end state was a fileable outcome: a case was categorized according to evidentiary sufficiency. When cross-checks pinned the timeline to verifiable external records, an explanation could be assigned with confidence. When the same cross-checks could not be run, or returned ambiguous results because the inputs were too soft, the case stayed unresolved because the record could not carry a stronger conclusion.

Modern UAP reporting, including AARO, emphasizes standardized data collection: consistent fields, repeatable intake, and preserving sensor outputs with time synchronization. The goal is not to mimic Blue Book’s process one-for-one, but to reduce the classic failure modes that kept older cases open: unclear timing, missing logs, and reports that cannot be independently tested.

That workflow explains why a fixed number like 701 can be simultaneously real in the paperwork and limited in what it proves: it reflects where the process ran out of defensible inputs.

The meaning of 701 unexplained

“701 unexplained” is not a discovery of non-human intelligence. It is the size of the evidence residue Project Blue Book could not close with the information available, recorded as an investigative outcome rather than a conclusion about origin.

In the commonly cited Blue Book summary figures, investigators logged 12,618 reports and classified 701 as “unidentified” (USAF fact sheet on Project Blue Book). People often compress that into “about 6 percent,” but that percentage is a shorthand summary, not a precision metric, and it should never be treated like a calibrated measurement of anomaly.
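The arithmetic behind that shorthand is short enough to check directly. The snippet below uses only the two totals quoted above; the variable names are just labels for this example.

```python
total_reports = 12_618
unidentified = 701

share = unidentified / total_reports
remainder = total_reports - unidentified

print(f"Unidentified share: {share:.2%}")
print(f"Reports with any other disposition: {remainder}")
```

The share works out to exactly one report in eighteen, roughly 5.6 percent, so “about 6 percent” is a round-up. The remainder of 11,917 is simply every report that did not end as “unidentified”; the calculation says nothing about how those reports were categorized.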

That distinction is where modern arguments usually break: people read “unidentified” as a hint of what something was, when the paperwork is closer to “not identified from what we can responsibly validate.” In an evidence system, that gap matters because it describes the limits of the record, not the nature of the sky.

Unresolved cases cluster in three failure modes that reliably produce an “unidentified” outcome: (1) insufficient data, (2) conflicting data, and (3) anomalous-but-nonconclusive observations. The label is often the product of those constraints, not a hidden verdict.

1) Insufficient data. Cases stall when key fields are missing or unstable: no exact time, no azimuth or elevation, no duration beyond “seconds,” no weather context, no usable description of angular size or motion. If a report cannot be reconstructed into a testable geometry, identification becomes guesswork, so the file stays open-ended.

2) Conflicting data. Contradictions inside the same case can be fatal to a clean identification: two witnesses disagree on distance by orders of magnitude, a direction of travel flips between statements, or a “hover” description conflicts with timing that implies a normal aircraft transit. When the conflict cannot be reconciled to a single physical scenario, “unidentified” becomes the honest disposition.

3) Anomalous-but-nonconclusive observations. Some reports contain features that read as unusual yet still fail the documentation threshold needed to pin down a cause: an apparent acceleration without a reference frame, a close-range claim without corroborating measurements, or a radar-visual claim (radar return plus visual report) where the records needed to validate the track are absent or incomplete. The observation can be suggestive and still not be dispositive.
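The three failure modes can be read as an ordered triage: check for missing inputs first, then contradictions, then unusual features that lack validating records. The following is a minimal sketch under that assumption. The field names, the ordering, and the sample cases are hypothetical and are not drawn from Blue Book procedure.

```python
# Hypothetical core fields a report needs before any geometry can be tested.
CORE_FIELDS = ("time", "azimuth_deg", "elevation_deg", "duration_s")


def failure_mode(case: dict) -> str:
    """Name the first failure mode that blocks an identification, if any."""
    if any(case.get(field) is None for field in CORE_FIELDS):
        return "insufficient data"
    if case.get("accounts_conflict", False):
        return "conflicting data"
    if case.get("unusual_feature", False) and not case.get("records_validated", False):
        return "anomalous but nonconclusive"
    return "no blocking failure mode found"


# Invented sample cases for illustration.
complete = {"time": "21:04", "azimuth_deg": 190, "elevation_deg": 25, "duration_s": 40}

print(failure_mode({"time": None, "duration_s": 5}))
print(failure_mode({**complete, "accounts_conflict": True}))
print(failure_mode({**complete, "unusual_feature": True}))
print(failure_mode({**complete, "unusual_feature": True, "records_validated": True}))
```

None of the branches returns a statement about origin. Each one names a gap in the record, which is all the “unidentified” label can support.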

Patterns frequently discussed around harder cases include multiple witnesses, radar-visual narratives, and close-range claims. Treat those as recurring talking points, not as quantified findings, unless you can point to the original tables and the specific definition used for each attribute.

Disclosure advocates cite “701” because it functions as an officially recorded remainder: even after investigation, a non-trivial number of reports were not closed with an identification. Skeptics cite the explained majority because the same totals show most reports did receive conventional dispositions. Both are true descriptions of the same dataset, and neither is a proof statement on its own.

Responsible interpretation follows evidence quality and documentation, not impressions. Use the 701 figure to ask disciplined questions: What minimum documentation would have prevented an unresolved disposition? Which fields were consistently missing? What chain-of-custody standards existed for radar logs, photos, and witness statements? “701 unexplained” is a prompt to demand better records and clearer criteria, because stronger documentation is what converts residue into resolution.

Note: year-by-year distributions or official percentage tables for the 701 figure are contained in the original Blue Book summary tables and case files; verify any granular breakdown or trend claim against the Project Blue Book microfilm or official summaries before republishing (National Archives Project Blue Book description).

Even so, public debate rarely runs on the median case file. It runs on a small set of incidents that became shorthand for what people think Blue Book was really doing.

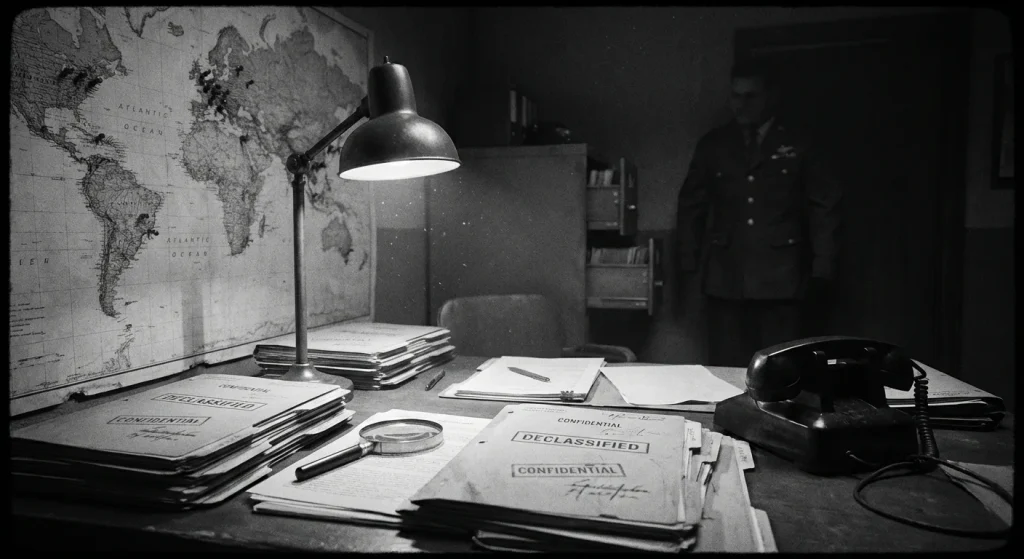

Cases that shaped public belief

A handful of high-visibility incidents, not the full Blue Book database, locked public belief into a permanent argument about competence versus cover-up. Each one fused incomplete evidence with intense attention, then tested whether the official explanation sounded like analysis or like damage control. The cases below are remembered because they created a credibility gap, not because they were statistically typical.

The July 1952 Washington, D.C. wave became a national story because it stacked different kinds of reporting on top of each other: radar tracks, pilot and crew observations, and targets described as maneuvering in restricted airspace. Multiple radars reportedly detected more than a dozen solid targets maneuvering over Washington, D.C., a detail that mattered because it reframed the problem from “someone saw a light” to “the system is seeing traffic.”

The specific witness timeline kept the pressure on. In the early morning of July 20, 1952, Capt. S.C. “Casey” Pierman reported a bright light while preparing for takeoff at Washington National Airport. On July 26, 1952 at 8:15 p.m., a National Airlines pilot and stewardess reported seeing strange objects above their plane. These weren’t anonymous callers; they were identified aviation professionals attaching names, times, and locations to what they said happened.

The complication is that radar-driven incidents create a double bind. The public hears “radar” and assumes objectivity, while controllers and investigators know radar can be fooled by propagation conditions, clutter, and interpretation differences between scopes. The official position emphasized that point: Air Force briefings leaned on temperature inversions and related radar effects to explain returns, while also treating some visual reports as misidentifications. That response reduced technical risk for the institution, but it also sounded to many people like an attempt to explain away a cluster that had already escaped into headlines. The result was durable: once a case is publicly framed as “radar versus excuse,” every later retelling inherits the conflict even when the underlying data is partial.

The Socorro incident persists because it reads like a close-range encounter rather than a distant light. On April 24, 1964, near Socorro, New Mexico, police officer Lonnie Zamora was pursuing a speeding car when he reported seeing an egg-shaped craft and two small humanoid figures. That narrative specificity is exactly what makes the case persuasive to many readers: shape, beings, distance, and a clear before-and-after sequence anchored to a working patrol officer.

The evidentiary record is also why the dispute never resolves cleanly. What Zamora claimed was direct observation of a craft and occupants. What investigators could document, at best, were conditions at the site and the consistency of the witness’s account. Contemporary reporting and later summaries point to physical trace discussion at the scene, but the core identification problem remains: the most extraordinary elements, the two small humanoid figures and the craft’s exact nature, live almost entirely inside a single witness statement. No known public record from the case provides independent photography of the occupants, and the case’s persuasive power therefore rests on credibility and corroboration, not on a fully reconstructable event.

Blue Book ultimately listed the Socorro case as “Unidentified,” a disposition that satisfied neither camp. Skeptics read it as a file that could not be solved with the available documentation. Believers read it as an admission that a close-range account from a trained observer survived scrutiny. The lasting lesson is straightforward: high-detail testimony increases public conviction, but it does not automatically increase verifiability. The more a case depends on one person’s eyes at one moment, the more it becomes a referendum on whether you trust the witness or the institution judging him.

The Michigan flap is remembered less for what was seen than for what was said about it. In 1966, a series of sightings around Hillsdale, Michigan drew press attention and public anxiety. Dr. J. Allen Hynek publicly advanced a “swamp gas” explanation for the Michigan sightings, and the reaction was immediate: ridicule, angry local pushback, and critical headlines that portrayed the explanation as a brush-off rather than an investigation.

The complication here is messaging under pressure. “Swamp gas” is not a joke term in atmospheric context, but in public language it landed as contempt. Once that framing took hold, every detail of the event became secondary to the perceived attitude behind the explanation. Even if some reports in the larger cluster fit mundane causes, the institution’s credibility took the hit, not just the specific case file.

The outcome mattered because it changed public perception of the program’s spokespeople. Hynek was criticized for the “swamp gas” explanation, later became a prominent public critic of official UFO explanations, and went on to play a leading role in civilian UFO research (J. Allen Hynek biography). That arc, from official consultant to public critic, became its own evidence in the popular mind: if the explainer walks away, people assume the explanation was never the real story. Michigan is the cleanest demonstration that a technically plausible answer still fails if it is delivered in a way the audience hears as dismissive.

Across these cases, the recurring pattern is not a single mystery object; it’s a repeated credibility contest. First, documentation quality sets the ceiling: radar plots without full context, site traces without definitive linkage, and witness testimony without independent capture all leave room for competing narratives. Second, media amplification turns ambiguity into identity, because the first strong storyline becomes the one the public defends. Third, institutions are incentivized to reduce risk fast, and fast explanations invite suspicion when they sound like public relations instead of casework.

That same dynamic drives today’s UAP news cycle: viral clips detached from full sensor context, instant conclusions built from partial frames, and official statements optimized for brevity. Read modern UAP news the way these files demand: ask what was observed, what evidence exists beyond a single channel, how complete the documentation is, and what the institution gains by the message it chooses.

Those credibility collisions didn’t disappear as the 1960s closed. They carried into the question Blue Book could never avoid indefinitely: whether the Air Force would keep running an open-ended investigative office at all.

From shutdown to disclosure politics

Project Blue Book did not end the UFO question. It ended one administrative program, and its shutdown paperwork became the template for every modern transparency argument: what did the government study, what did it conclude, and where can the public read the underlying records?

The Air Force tied Blue Book’s end to an outside scientific review it could point to as an independent basis for closure. The Condon Report, officially titled Report of the Scientific Study of Unidentified Flying Objects, was produced by the University of Colorado under contract to the United States Air Force and is associated with physicist Edward U. Condon. That “under USAF contract” detail matters because it framed the report as a formal deliverable, not an internal memo: a contractor study that could be cited in administrative decisions.

The friction is that “study completed” is not the same thing as “question resolved.” The shutdown rationale operated at the program level: if the Air Force judged that continued casework was not producing results that justified the expenditure and structure, it could rescind the regulation that created the program and close Blue Book as an office function. That decision does not prove the sky is empty or full; it proves the institution chose to stop staffing a specific investigative pipeline and to rely on a documented scientific review as the public-facing anchor.

The practical takeaway is simple: the Condon Report’s role in the record is less about winning a debate and more about how agencies justify terminating an activity. It is the citation you see again and again when people argue over whether the government “looked” in a meaningful way.

Blue Book’s afterlife is documentary, not anecdotal. The National Archives holds all Project Blue Book documentation on 94 rolls of microfilm (T1206), and that set includes both case files and administrative records (NARA Project Blue Book overview and T1206 microfilm description). That matters because case files show what investigators recorded about specific reports, while administrative records show how the program was run, what standards were applied, and how decisions were documented.

The catch is usability: microfilm is accessible, but it is not frictionless. Finding a specific incident, date range, or administrative directive can take real effort. Still, the existence of an organized archival set means arguments about Blue Book do not have to be purely rhetorical; they can be tethered to primary materials.

Modern disclosure politics constantly re-litigate Blue Book because it supplies a ready-made precedent. One side cites it as “the government looked and found nothing extraordinary.” The other cites it as a lesson in narrative management: an official program can end, yet unresolved questions and ambiguous records leave residue that fuels oversight demands. Both arguments lean on the same artifacts: contractor studies, termination rationales, and the availability of the underlying files.

The contemporary institutional analogue is reporting, not methodology. The Department of Defense’s All-domain Anomaly Resolution Office (AARO) published Historical Record Report: Volume 1 in 2024 (AARO Historical Record Report, Volume 1), and AARO and DoD published a consolidated annual report that specifies coverage from May 1, 2023 to June 1, 2024 (FY24 Consolidated Annual Report on UAP), demonstrating how recurring reports create a predictable paper trail for oversight.

When legislation enters the conversation, naming precision becomes part of the oversight fight. Recent text is cited as the “UAP Disclosure Act of 2024,” and even the section formatting (for example, a “SEC. ll02.” marker) can matter when people argue about what a proposal actually says versus what they assume it says.

For readers, the rule is unforgiving: transparency arguments are only as strong as the documents they can point to, and whether those documents are accessible and specific.

Put differently, Blue Book’s closure did not remove ambiguity; it preserved it in records, citations, and categories that still shape how people argue about evidence today.

What Blue Book still teaches us

Project Blue Book’s enduring value is not that it “proved” anything, but that it shows how quickly ambiguous events harden into permanent narratives when documentation is thin. Once a label is attached, the public story often outruns the file, and the gap gets filled with certainty instead of evidence.

Reading the record with discipline matters because Blue Book operated inside an institutional mission tension: national security triage, scientific evaluation, and public confidence signaling were all competing priorities, and the paperwork shows how easily those goals pull in different directions. The methods-and-data reality compounded it: case quality depended on what got reported, how fast it was recorded, and what investigators could actually verify, not on how compelling a story sounded later. Those constraints are exactly why the program’s unresolved remainder can be simultaneously important and limited: “unidentified” is a disposition category, not an origin claim. The credibility lessons from Washington, D.C., Socorro, and Michigan land the same way: high-profile cases can dominate memory, but controversy around classification, interpretation, and public messaging is exactly where narratives detach from evidentiary footing. Those lessons reduce to an evidence checklist:

- Contemporaneous documentation (original logs, timestamps, primary notes)

- Multi-witness consistency (independent accounts that align on specifics)

- Multi-sensor corroboration (radar, video, telemetry, ATC data)

- Chain of custody for media (who captured it, stored it, transferred it)

- Competing hypotheses (conventional explanations tested, not waived)

Credible “meaningful disclosure” looks like better data capture at the point of observation, better transparency about what was collected and what was withheld, and a clean separation between disposition categories and origin claims. Today’s official UAP reporting and congressional oversight raise expectations for documentation and auditing, but they do not retroactively upgrade Blue Book-era evidence. Treat the next viral UAP headline like an evidence problem: if it cannot clear the checklist, it is not disclosure, it is a story looking for paperwork.
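The checklist above is simple enough to apply mechanically. Here is a minimal sketch, assuming each item can be reduced to a yes or no answer. The key names and the sample claim are hypothetical, and a real assessment would need graded judgments rather than booleans.

```python
# Checklist items from the list above, rendered as hypothetical dictionary keys.
CHECKLIST = (
    "contemporaneous_documentation",
    "multi_witness_consistency",
    "multi_sensor_corroboration",
    "chain_of_custody",
    "competing_hypotheses_tested",
)


def missing_items(claim: dict) -> list[str]:
    """Return the checklist items a claim has not documented."""
    return [item for item in CHECKLIST if not claim.get(item, False)]


# Invented example: a clip with agreeing witnesses and nothing else on record.
viral_clip = {"multi_witness_consistency": True}

gaps = missing_items(viral_clip)
print("clears checklist" if not gaps else f"missing: {', '.join(gaps)}")
```

Returning the list of gaps, rather than a single pass or fail flag, keeps attention on which records would need to surface before the claim could be tested.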

Frequently Asked Questions

What was Project Blue Book and when did it run?

Project Blue Book was a U.S. Air Force program created to absorb, triage, investigate, and disposition UFO reports at scale. It launched in March 1952 and is commonly framed as running from 1952 to 1969.

How many UFO reports did Project Blue Book investigate and how many were left unidentified?

Blue Book logged 12,618 total reports and classified 701 as “unidentified.” Those 701 are case-disposition outcomes, not conclusions about origin.

Does Project Blue Book’s “701 unexplained” number prove non-human intelligence or aliens?

No. “Unidentified” and “unexplained” functioned as administrative case categories meaning the available record could not support a confident identification. The 701 figure reflects an evidence residue, not a finding of non-human intelligence.

How did Project Blue Book investigate UFO sightings?

Investigators turned reports into timelines and cross-checked them against external data like astronomy, weather (including temperature inversions), known aircraft/balloon activity, radar correlation, and photo/film provenance. Cases were then disposed of by category based on what the file could support.

Why did some Project Blue Book cases stay unresolved?

Unresolved cases clustered into three failure modes: insufficient data, conflicting data, and anomalous-but-nonconclusive observations. Common gaps included missing exact times, bearings, corroboration, or sensor logs, any of which could prevent clean cross-checks.

Where can you access the original Project Blue Book records today?

The National Archives holds all Project Blue Book documentation on 94 rolls of microfilm (T1206). This includes both case files and administrative records showing how the program was run and how dispositions were recorded.

What should you look for to judge whether a modern UAP video or headline is signal or noise?

Use an evidence checklist: contemporaneous logs and timestamps, multi-witness consistency, multi-sensor corroboration (radar/video/telemetry/ATC), chain of custody for media, and conventional hypotheses tested. If a claim cannot clear those documentation standards, it is not “disclosure,” just a story without paperwork.