France set a concrete transparency benchmark in March 2007, when a state-linked body began putting its UFO case-file archives online for the public. CNES/GEIPAN announced the release that month, and CNES described it as involving more than 100,000 official documents. You can read a dozen UAP disclosure headlines without ever touching a primary document; France’s 2007 move shows what it looks like when a government-adjacent program actually puts paperwork where the public can inspect it.

The modern UFO disclosure news cycle is loud, politicized, and repetitive, and it keeps rewarding claims over receipts. If you want something verifiable, “someone said something in a hearing” is not a benchmark.

The reason the French release still matters is that it came from an established, institutional process rather than a one-off leak or a personality-driven narrative. CNES describes GEIPAN as a group created in 1977 to collect, analyze, and archive information on unidentified aerospace phenomena, which anchors the release in a long-running mandate instead of a short-term media moment.

The friction is obvious once you look at what “transparency” really buys you. Public access to investigative files is a measurable act of openness, but it does not automatically deliver the outcome many people are actually chasing: definitive “alien disclosure” or confirmation of non-human intelligence. The news cycle often blurs that distinction, because “documents released” and “extraordinary conclusion proven” generate very different emotions and very different clicks.

One limitation should be stated plainly: the archives began going online in March 2007, but the sources behind this article do not establish the specific day. That gap is part of the larger lesson here: serious disclosure standards are built on what can be pinned to records, not what can be repeated confidently.

This article explains what France released, what’s in it, what it does not prove, and the practical yardstick it sets for judging today’s disclosure expectations: prioritize primary case files and a documented investigative process over rumor.

What opened in 2007 and why

What “opening the archives” meant in practice was simple and consequential: France put primary case material online in a public-facing system, beginning in March 2007. The CNES/GEIPAN announcement framed the move as an institutional decision to make an existing body of reports, analyses, and archival records accessible through an official channel.

A source of tension is that “opened” can sound like a dramatic proclamation about conclusions. The archive release does not function that way. It functions like a public records gateway: an official pathway to read what the state-linked program collected and how it organized that material.

Ownership matters because it tells you whether you are looking at an institutional product or a side project. The archive sits under CNES (France’s national space agency), and the operating group responsible for managing the material is GEIPAN. The CNES project page for GEIPAN describes its role and placement within the agency, and the GEIPAN site explains its mission, methods, and results in detail. GEIPAN is structured as a public-facing unit whose job is to handle reports and make information available to the public, which is precisely why a web-published archive fits its mandate rather than contradicting it.

That also clarifies what the archive represents: it is not “someone in government posted files.” It is CNES, through GEIPAN, publishing case records as part of an ongoing program.

The 2007 archive release came out of a long-running institutional track, not a newly invented initiative. The study group GEPAN was created in 1977 within CNES to study what it framed as unidentified aerospace phenomena. Over time, the organization changed names from GEPAN to SEPRA and later to GEIPAN, while remaining situated under CNES as the parent body.

The nuance is that name changes often get misread as discontinuity, as if the program “stopped” and then “restarted.” At the functional level, the through-line is continuity: a CNES-linked structure tasked with taking in reports, working them, keeping records, and communicating outward. The 2007 decision to publish archives online makes sense as an extension of that continuity, because a program designed to collect and archive is structurally capable of publishing those archives.

GEIPAN’s stated mission framing is operational rather than sensational: collecting reports, analyzing and investigating them, archiving the resulting material, and informing the public. That combination matters because it sets expectations for what a reader encounters. You are not reading a marketing narrative or an advocacy brief; you are reading the output of a system built to process incoming observations and preserve the record in a usable form.

The complication is that “informing the public” can be misunderstood as “declaring answers.” In this context, it is better read as a public-service commitment: make the record visible, describe how the program handles reports, and let readers see the official vocabulary and structure applied to real cases.

France’s vocabulary choice is not cosmetic; it changes how the archive reads. GEIPAN replaces “UFO” with PAN (Phénomènes Aérospatiaux Non-identifiés), which operationalizes the subject as an aerospace problem statement instead of a pop-culture label. In the same direction, UAP (Unidentified Aerial Phenomena) functions as the modern umbrella term that signals “unidentified” as a status in an investigation, not a conclusion about origin.

The practical effect is interpretive discipline. “PAN/UAP” nudges the reader toward casework: observation, data handling, classification, and archiving. “UFO” pulls many readers toward folklore and predetermined narratives. The vocabulary does not change the files; it changes the framing an institution applies when it presents them.

“Opened” means you have an official, CNES-linked pathway to primary records managed by GEIPAN, published online beginning in March 2007, using a PAN/UAP vocabulary that treats reports as aerospace anomalies to be documented and worked. It is a transparency mechanism: public access to the case record and the state-adjacent language used to describe it, not an “alien disclosure” proclamation.

Inside the 100,000 pages

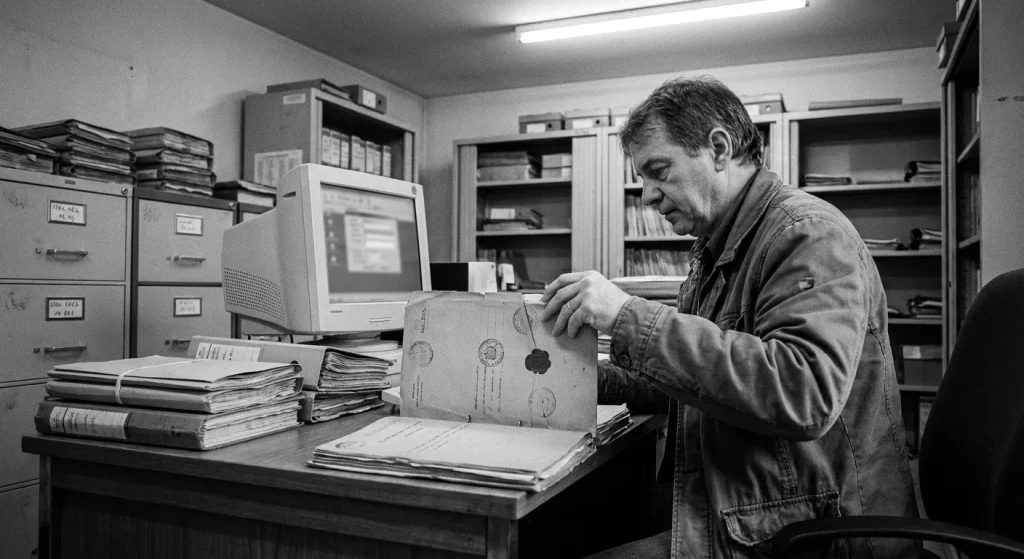

That institutional setup matters most once you start reading the files themselves. The archive earns its keep because it shows the paperwork behind each report: what was claimed, what was checked, and what the investigators could or could not close. You are not reading a single “official conclusion” written years later; you are reading case files that preserve observation details, follow-ups, and the evidentiary gaps that shape outcomes.

The files are uneven, and that becomes apparent once you open a few dossiers: some are rich with attachments and timelines, others are thin because the report arrived late, the witness had limited recall, or there was nothing to corroborate. That unevenness is exactly why the archive is valuable, and why its classification system exists.

A typical dossier is built to answer practical questions investigators always need: Where was the observer, what did they see, how long did it last, what direction did it move, and what else in the environment could explain it. The materials vary by case, but the recurring document types each add a specific kind of leverage.

- Witness reports and questionnaires: These anchor the timeline (start/end time, duration), the observer’s vantage (location, orientation, elevation), and the description language the witness chose before it gets “normalized” by anyone else. They also expose uncertainty directly: distance estimates, size comparisons, and whether the witness lost sight behind terrain or buildings.

- Police or gendarmerie notes: When a report passes through law enforcement, you often get a standardized record of identities, locations, and the initial narrative, plus any immediate checks performed locally. These notes matter because they time-stamp the first account and sometimes document whether other calls came in from the same area.

- Sketches and diagrams: A drawing can do what paragraphs cannot: lock in angles, relative motion, and the witness’s implied geometry. In practice, sketches often clarify whether the report is compatible with a distant light, an aircraft track, a reentry, or a nearby object with parallax.

- Photos (and occasionally video stills): Images rarely “solve” a case on their own, but they can preserve light color, blinking patterns, and the presence of reference points on the horizon. They also reveal the hard limits of the data, because many night images saturate and erase context.

- Investigation summaries: These are the connective tissue. A summary typically compiles the witness account, lists checks performed (astronomical objects, aircraft activity, local events), and states why the case was closed as identified, left as insufficient, or kept as unidentified.

- Occasional aviation, air-defense, or radar references: Some files include references to air traffic context or other aeronautical information when it was available to investigators. The key word is “when”: these elements appear unevenly, and their absence is not, by itself, evidentiary.

The operational takeaway is simple: you are meant to read across these materials, not just the final label. A one-line conclusion is only as strong as the timeline, vantage, and environmental context beneath it.

GEIPAN classifies UAP (PAN in French) into categories A, B, C, and D, forming a scale from fully identified to unidentified. The point of this PAN classification is to prevent “all sightings are equal” thinking: it sorts cases by explanatory status and evidentiary sufficiency so investigators and the public can distinguish between an identified stimulus, an uncertain report, and a remaining unknown. GEIPAN documentation lays out these category definitions and the classification logic.

That sorting also admits an uncomfortable truth: evidence quality drives outcomes. A case can be “unidentified” because the description is consistent but the data are too thin to tie it to a known cause, while another case can be “identified” because a single decisive check matches the witness timeline precisely. Classification exists to reflect that reality in a standardized way.

Since 2008, GEIPAN has used a more detailed PAN classification (A/B/C/D1/D2) based on two criteria: “weirdness” and “consistency.” In practice, those criteria separate cases that are merely unusual-looking from cases where the testimony hangs together under scrutiny. The refinement matters because it makes the “D” bucket less monolithic: it distinguishes reports that are strange but poorly supported from those that remain strange after the available checks. See the GEIPAN methods and documentation for details on classification and methodology.

GEIPAN’s public reporting is blunt about the workload reality. GEIPAN statistics show that a large share of cases are resolved: the published figures indicate that 63.2% of cases classified A and B are explained by misidentification or perception mistakes. Operationally, that means the archive is dominated by resolvable problems: distant lights, normal aeronautical activity, astronomical objects, and human perception under poor viewing conditions. The system is built to close those efficiently and document why they close.

It also signals where effort concentrates. Around 10% of GEIPAN cases led to on-site investigations; in its methods and case notes, GEIPAN describes turning to field investigations when desk checks are insufficient. Field work is expensive and slow, so that percentage effectively marks the threshold where desk-based checks were not enough, or where physical context (terrain, sightlines, traces, interviews) could materially change the evidentiary picture.

The numbers are not static snapshots. Since 2016, GEIPAN has used “dynamic” statistics, calculated from the classified cases published on its website, as described on its statistics page. Read that as a living ledger: as more cases are published, updated, or reclassified based on additional information, the distribution adjusts. The archive is a case management system exposed to the public, not a one-time report that never changes.
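To make the “living ledger” idea concrete, here is a minimal Python sketch, assuming a hypothetical set of published case labels. The case IDs and labels are invented for illustration and are not GEIPAN data, and the sketch does not claim to reproduce GEIPAN’s actual calculation. It simply recomputes category shares from whatever cases are currently classified, so reclassifying a single case shifts the whole distribution.

```python
from collections import Counter

# Category labels from GEIPAN's post-2008 scheme, as described above.
CATEGORIES = ("A", "B", "C", "D1", "D2")


def category_shares(cases: dict[str, str]) -> dict[str, float]:
    """Return each category's share (in %) of the currently classified cases.

    `cases` maps a hypothetical case ID to its current label.
    """
    counts = Counter(cases.values())
    total = sum(counts.values())
    if total == 0:
        return {cat: 0.0 for cat in CATEGORIES}
    return {cat: round(100 * counts[cat] / total, 1) for cat in CATEGORIES}


# Toy ledger: invented IDs and labels, for illustration only.
ledger = {"case-001": "A", "case-002": "B", "case-003": "C", "case-004": "D1"}
print(category_shares(ledger))

# Reclassify one case after new information arrives; the shares move.
ledger["case-004"] = "B"
print(category_shares(ledger))
```

In the toy run, the D1 share drops to zero and the B share doubles after one reclassification, which is the behavior a dynamic statistics page would show at scale.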

The fastest way to understand the archive is to recognize recurring report shapes. These profiles do not imply a single cause; they show why classification and documentation discipline matter.

- Lights at distance: A witness sees a stationary or slowly moving light over a horizon line, often at night. These files live or die on timestamps, direction of view, and whether the report includes reference points. The best dossiers include sketches that pin the light to a bearing and elevation.

- Close-range reports: A short-duration event at low apparent altitude, sometimes described as structured. These dossiers tend to include more detailed narratives and, when investigators engaged, a stronger reconstruction of sightlines and distances.

- Physical traces: A report that includes ground marks, vegetation effects, or other alleged trace elements. When these appear, the file often shifts from “what was seen” to “what was left,” and the decisive question becomes chain of custody and documentation quality rather than spectacle.

- Multi-witness events: Multiple observers reporting the same phenomenon from different locations. The advantage is cross-checking consistency; the drawback is that correlated misperception is still possible if everyone is reacting to the same distant stimulus.

In the GEIPAN archive, “unidentified” is an investigative status: a case where the available material did not support an identification under the program’s classification logic. It is not a declaration about non-human intelligence. The disciplined way to use the archive is to treat the PAN label as an index, then verify the case strength by reading the underlying witness accounts, sketches, photos, and investigation summary that earned that label.

Transparency without a smoking gun

Once you’ve seen how uneven case inputs can be, the limits of what publication can prove become easier to state precisely. Publishing case files is only meaningful when the reader can see how claims were handled, not just what someone reported. GEIPAN’s public role includes collecting, analyzing, investigating, publishing, and archiving reports, and CNES positions that work as public information as well as analysis. That combination makes the archive unusually credible as a record of what was reported and what was done with it, not as a machine that produces definitive identifications on demand.

That distinction matters for expectations. Openness cuts against the simplest “they have everything and hide it” cover-up story, because it exposes the mundane mechanics of triage, follow-up, and documentation. Openness does not equal “alien disclosure,” and it does not convert unresolved files into confirmed non-human intelligence.

GEIPAN describes its investigations as using a reproducible, science-based methodology for each survey. That wording signals an operational standard: cases are supposed to be worked with the same core approach rather than reinvented per investigator or per headline cycle.

GEIPAN’s published methodology also includes cognitive interviews and on-site investigations. Cognitive interviewing is where reliability gets pressured: investigators try to lock down sequence, vantage point, timing, and perceptual context instead of letting a narrative harden into a story. On-site work is where geometry and environment stop being abstract, because distances, sightlines, and sources of confusion can be tested against the location itself. See GEIPAN’s methods documentation for more on investigative practice and PAN definitions.

GEIPAN also uses IPACO®, an image-analysis tool, in its investigations. Used properly, forensic image analysis does the opposite of “enhancing” mystery; it constrains what a photo or video can plausibly represent by forcing interpretations to match pixels, optics, compression artifacts, and perspective.

Even disciplined methodology runs into a hard ceiling: most sightings are not instrumented events. Many reports arrive after the fact, without calibrated imagery, without verified timing, and without independent sensor data that could anchor a reconstruction. When the raw inputs are thin, a case file can document real investigative labor and still end with an outcome that is not decisive.

Witness data is also uneven by nature. A well-intentioned observer can be precise about their reaction and still be imprecise about altitude, distance, and angular speed, especially at night or with unfamiliar reference points. That variability is not a moral judgment on witnesses; it is a predictable limitation of human perception under stress, surprise, and low-information viewing conditions.

The practical consequence is straightforward: a portion of the archive will remain ambiguous even after workup, because ambiguity is sometimes the honest residue left when measurements are missing and corroboration is weak.

Transparency changes the shape of conspiracy thinking, not always its intensity. When readers can see investigative steps and primary documents, “nothing is investigated” becomes harder to sustain. But the same openness also hands motivated interpreters a different raw material: any unresolved case can be reframed as “proof,” and any redaction, omission, or dead end can be framed as intent rather than logistics.

GEIPAN’s own language is a useful corrective here. It explicitly avoids the term “UFO,” preferring PAN (unidentified aerospace phenomena) or UAP, which is a small but concrete signal of process over spectacle.

Treat the archive as two parallel records: a record of claims and a record of handling. The first tells you what was perceived and reported; the second shows whether the report was collected, analyzed, investigated, published, and archived in a way that leaves a trail you can audit.

When you read a file, keep three questions in view without turning it into a ritual checklist. First, what methodology was applied, and does the narrative reflect structured elicitation rather than free-form storytelling? Second, what corroboration exists across independent sources, meaning sources that do not share the same vantage point, incentives, or information channel? Third, what are the evidentiary gaps, meaning the specific measurements or reference data that would be required to collapse the uncertainty but are not present in the record?

If you hold that line, you get the real value of this release: not automatic answers, but a public, inspectable account of how a state-linked team moves from report to investigation to documentation, and why that path sometimes ends in “unresolved” without implying “extraterrestrial.”

France versus today’s UAP disclosure fight

Once transparency is defined in terms of auditable casework, the contrast with today’s disclosure disputes becomes structural rather than rhetorical. “Disclosure” is not a vibe; it’s an output. France’s 2007 move treated transparency as a publishing problem: put primary case files in the public’s hands so outsiders can inspect what investigators saw. The modern U.S. approach treats transparency as an institutional reporting problem: stand up offices, formalize intake, then issue periodic summaries that describe activity without routinely releasing the underlying case file material.

That output choice is the structural fork in the road. Primary files let the public audit the work. Office-led reporting asks the public to trust that the work was done correctly, even when the raw inputs stay behind the wall.

In a “publish the file” model, the public gets traceable artifacts: the narrative as originally recorded, the contemporaneous documentation, and the investigative notes tied to a specific case. The tension here is obvious: privacy protection, redactions, and the effort of sanitizing and hosting material at scale. The payoff is equally obvious: independent readers can verify whether the official conclusion matches the record.

In the U.S. “offices plus periodic reports” model, the public more often receives summaries: annual or recurring reports to Congress, definitional updates, and framing statements about process. Familiar touchpoints sit in this lane: AARO and the Pentagon’s framing of its UAP office, plus public moments such as the House Oversight Committee hearing and David Grusch’s testimony. Each can accelerate or erode trust depending on whether the public can see primary documentation behind the claims.

Summaries are efficient, but they widen the credibility gap: the public debates conclusions while lacking the inputs. That dynamic rewards rhetoric and punishes nuance.

U.S. UAP transparency efforts repeatedly ride through the National Defense Authorization Act because the NDAA is the recurring defense authorization vehicle. The first NDAA was passed in 1961. The NDAA authorizes appropriations for the Department of Defense and nuclear weapons programs, which makes it the natural legislative chassis for standing up offices, directing reporting, and setting internal compliance requirements.

The catch is that an authorization cycle is built for governance, not public archiving. It produces mandates, deadlines, and overseer-facing deliverables. That’s structurally different from publishing primary case files to the public as a default output.

The Schumer UAP Disclosure Amendment became a litmus test because it represented a rare attempt to legislate something closer to “show your work” disclosure rather than “trust our reporting” disclosure. Public reporting around the Schumer UAP Disclosure effort describes the final language as significantly revised or diluted versus earlier proposals.

That public narrative hardened for two reasons that matter at a process level. First, the Schumer UAP Disclosure Amendment originally included whistleblower protection provisions, which signaled an incentive shift toward surfacing information. Second, a revised version removed or “nixed” at least one provision, and observers described the final language as a “dramatic dilution.” Regardless of anyone’s preferred interpretation of UAP claims, those revisions taught the public a simple lesson: disclosure is negotiated, and the negotiated product is often narrower than the initial pitch.

Primary-file publishing and institutional reporting can coexist, but they need a clear rule for what “public” means. The durable standard is straightforward: demand explicit evidence-release criteria (what gets released, how fast, and with what redactions) and traceable sourcing (a report’s claims tied to identifiable, releasable case materials wherever legally possible). If a system can’t point from a public conclusion back to the underlying documentation, it’s not disclosure; it’s messaging.

How to use the archive now

That is why the French archive remains immediately useful: it lets readers practice evidence-first evaluation on real, published casework. You get the most from it by reading the way investigators and archivists do: one case at a time, anchored to dates, locations, sources, and documents. CNES presents GEIPAN as a technical department within the French space agency and as the “Group for the Study and Information of Unidentified Aerial/Aerospace Phenomena.” That framing matters because it implies documentation and traceability, not entertainment.

The difficulty is that case files are uneven: some are rich, some are thin, and the interface you see today can change without notice. The disciplined move is to build your own repeatable capture routine so every file becomes comparable. Browse the official GEIPAN portal to see how files are presented, and consult the methods documentation for guidance on investigation procedures and PAN definitions.

The sources used for this article do not verify specific GEIPAN site filters, update cadence, release logs, “latest update” dates, or criteria for when a case is posted. Treat the interface as variable and keep your workflow generic.

- Browse the case listings and open a single case file end-to-end before forming an opinion.

- Download or save the case documents (format may vary) so you can re-check details later without relying on the site view.

- Record the case metadata in your notes: date, time window, location, witness count (if stated), and the originating source (police report, gendarmerie, civilian letter, etc.).

- Log any classification label exactly as written (for example, “unidentified” versus “explained”) and keep it attached to the specific document version you read.

- Extract the concrete observables the file actually supports: direction of travel, duration, angular position, weather references, photos, and stated uncertainties.

That last step is where most readers slip: they remember the story, not the measurable claims. Your notes should preserve what the document says, not what you wish it implied.
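The capture routine above maps naturally onto a small record type. Here is a minimal Python sketch, assuming you keep notes in code rather than a spreadsheet; every field name is a hypothetical choice for personal notes, not a GEIPAN schema, and the example values are placeholders. The `missing_fields` helper (also hypothetical) flags notes where core metadata was never captured.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CaseNote:
    """One reader's structured notes on a single archive case file.

    Field names are a hypothetical personal schema, not a GEIPAN format.
    """

    case_ref: str            # identifier as shown on the case page
    date: str                # date exactly as written in the file
    time_window: str         # start/end or duration, as stated
    location: str
    source: str              # police report, gendarmerie, civilian letter...
    label_as_written: str    # classification label copied verbatim
    document_version: str    # which saved copy the label came from
    witness_count: Optional[int] = None  # None when the file does not say
    observables: List[str] = field(default_factory=list)
    stated_uncertainties: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """List core metadata fields left blank, to flag thin notes."""
        core = {
            "date": self.date,
            "time_window": self.time_window,
            "location": self.location,
            "source": self.source,
            "label_as_written": self.label_as_written,
        }
        return [name for name, value in core.items() if not value.strip()]


# Placeholder values only; fill these from the document you actually read.
note = CaseNote(
    case_ref="hypothetical-001",
    date="",  # left blank on purpose to show the check
    time_window="about 15 min, start time not stated",
    location="(as given in the file)",
    source="gendarmerie",
    label_as_written="(copied verbatim)",
    document_version="saved PDF, first download",
    observables=["moving west to east"],
)
print(note.missing_fields())  # → ['date']
```

Keeping `label_as_written` and `document_version` side by side is a deliberate choice: a reclassified case should not silently overwrite the label you recorded from an earlier copy.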

Many “UFO” cases become ordinary once you validate context that a case file cannot fully embed. Keep checks mundane and time-bound: confirm sky conditions and bright objects using astronomy context (sky maps and planetary positions); check aviation activity using flight tracking and local airfield schedules; and scan local reporting for balloons, events, fires, or power outages. The catch is confirmation bias: if you only look for exotic explanations, you will “find” them. The rule is simple: match time, place, and line-of-sight first, then reassess what remains unexplained.
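The “match time first” rule is essentially an interval-overlap test. Here is a minimal Python sketch, assuming you have already gathered candidate context (planet visibility, flight tracks, local events) into time windows by hand; the event labels, dates, and 10-minute tolerance are hypothetical. It handles only the time dimension; place and line-of-sight still need separate checks.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class ContextEvent:
    """A candidate mundane explanation with its own active time window."""

    label: str
    start: datetime
    end: datetime


def overlapping_events(
    sighting_start: datetime,
    sighting_end: datetime,
    events: List[ContextEvent],
    tolerance: timedelta = timedelta(minutes=10),
) -> List[ContextEvent]:
    """Return events whose window overlaps the sighting window.

    The sighting window is widened by `tolerance` on both sides, because
    witness timestamps are often approximate.
    """
    lo = sighting_start - tolerance
    hi = sighting_end + tolerance
    # Two intervals overlap when each one starts before the other ends.
    return [e for e in events if e.start <= hi and lo <= e.end]


# Hypothetical context gathered from sky maps, flight tracking, local news.
events = [
    ContextEvent("bright planet low in the west",
                 datetime(2020, 6, 1, 21, 0), datetime(2020, 6, 1, 22, 30)),
    ContextEvent("lantern release at a local festival",
                 datetime(2020, 6, 1, 23, 0), datetime(2020, 6, 1, 23, 20)),
]
hits = overlapping_events(
    datetime(2020, 6, 1, 22, 35), datetime(2020, 6, 1, 22, 45), events
)
print([e.label for e in hits])  # → ['bright planet low in the west']
```

A match here is only a lead: it tells you which mundane candidates deserve a bearing and elevation check, not that the case is solved.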

Ignore prediction-driven “UFO news” and track disclosure like a records problem. Substance shows up when officials publish primary material, not just interpretations: original imagery or sensor outputs, collection parameters, chain-of-custody notes, redaction justifications, and clear statements of analytic method. Strong reporting standards look like this: documents linked in full, dates and office owners named, and claims separated from hypotheses. Weak signals are familiar: anonymous summaries, edited clips with no provenance, and “trust us” conclusions that cannot be independently checked.

Make one habit non-negotiable: every time you read a case or a headline, capture the document, the provenance (who produced it, when, and why), and the stated method used to classify it. If the next wave of 2025-2026 disclosure does not come with primary data and auditable handling, it is noise, not progress.

What France’s 2007 move still means

The disclosure benchmark that holds up over time is simple: publish primary records and publish the method used to evaluate them. France’s 2007 release mattered because it made the public-facing archive the product, not a press claim, and it let anyone audit how cases move from raw reports to structured outcomes.

What sits at the center of that archive is casework you can actually inspect: collected reports, investigative write-ups, and an explicit classification outcome attached to each file. GEIPAN’s stated mission includes collecting, analyzing, investigating, publishing, and archiving UAP reports, and its case conclusions are organized in defined categories rather than left as anecdotes.

The point is not that the archive delivers a single, definitive answer; it is that it makes the underlying record visible. Cases that remain in an “unidentified” category reflect what the available data could not settle under the program’s process, and the archive’s value is that you can see exactly why.

That discipline is continuous, not a one-off stunt. In 1978 and 1979, 28 engineers from CNES Toulouse carried out expert evaluations of reports covering the 1970-1979 period, a sign that institutional review and re-review have long been part of the French approach.

If modern UAP “disclosure” is going to mean more than rhetoric, the standard is the same one signaled in the introduction: documents over claims, and a traceable method behind the paperwork. Spend time with the official CNES/GEIPAN archive, insist on consistent release standards, and reward documentation over rumor.

Frequently Asked Questions

What did France release online in March 2007 about UFOs/UAP?

In March 2007, France began publishing its UFO/UAP case-file archives online through an official public system. The release involved primary case materials (reports, analyses, and archival records) rather than just summary statements.

What is GEIPAN and how is it connected to CNES?

GEIPAN is the CNES-linked group tasked with collecting, analyzing, investigating, archiving, and informing the public about unidentified aerospace phenomena. It traces continuity from a CNES study group created in 1977 (GEPAN), later known as SEPRA and then GEIPAN.

Why does GEIPAN use the terms PAN or UAP instead of UFO?

GEIPAN uses PAN (Phénomènes Aérospatiaux Non-identifiés) to frame cases as “unidentified” aerospace phenomena under investigation, not as a conclusion about origin. This vocabulary emphasizes documentation, classification, and casework rather than pop-culture “UFO” narratives.

What kinds of documents are inside the French GEIPAN UFO/UAP archive?

Typical dossiers include witness reports/questionnaires, police or gendarmerie notes, sketches/diagrams, photos (and sometimes video stills), and investigation summaries. Some files also include aviation or radar context when it was available.

How does GEIPAN classify PAN/UAP cases (A/B/C/D), and what changed in 2008?

GEIPAN uses PAN categories A/B/C/D to separate identified cases, uncertain cases, and remaining unidentified cases based on evidentiary sufficiency. Since 2008, it has used a more detailed scheme (A/B/C/D1/D2) based on two criteria: “weirdness” and “consistency.”

What percentage of GEIPAN cases are explained, and how often do on-site investigations happen?

GEIPAN reports that 63.2% of cases classified A and B are explained by misidentification or perception mistakes. About 10% of cases led to on-site investigations.

How is France’s 2007 “publish the case files” approach different from modern U.S. UAP disclosure efforts?

France’s model puts primary case files online so the public can audit the underlying documentation and investigative handling. The article contrasts this with a U.S. pattern of office-led transparency (e.g., periodic reports) that often provides summaries without routinely releasing the underlying case-file materials.