The unresolved cases are the part that keeps the story alive. You’ll see “UFO disclosure” or “UAP disclosure” trend again, the clips recirculate, the takes get louder, and then the official record lands on the same maddening outcome: a chunk of incidents still don’t get a neat, public answer. That gap is where certainty rushes in, even when the paperwork stays careful and conditional.

That’s why a lot of today’s UFO news and UAP news still orbits the same handful of official moments. When the government talks about Unidentified Anomalous Phenomena (UAP), it’s usually describing reports that haven’t been identified yet, not a finished conclusion. And when the All-domain Anomaly Resolution Office (AARO) is the named “process” in the story, it’s a reminder that you’re watching an effort to triage, analyze, and close cases, not a guarantee that every case will be closed in public.

If you’re skeptical of “alien disclosure” framing, unresolved cases still matter because they’re a stress test for trust. Progress can be real and still feel evasive if the outcome is, “we looked, and we can’t resolve this one.” That tension is exactly what fuels the government UFO cover-up narrative, even when the documents themselves stay more cautious than the headlines.

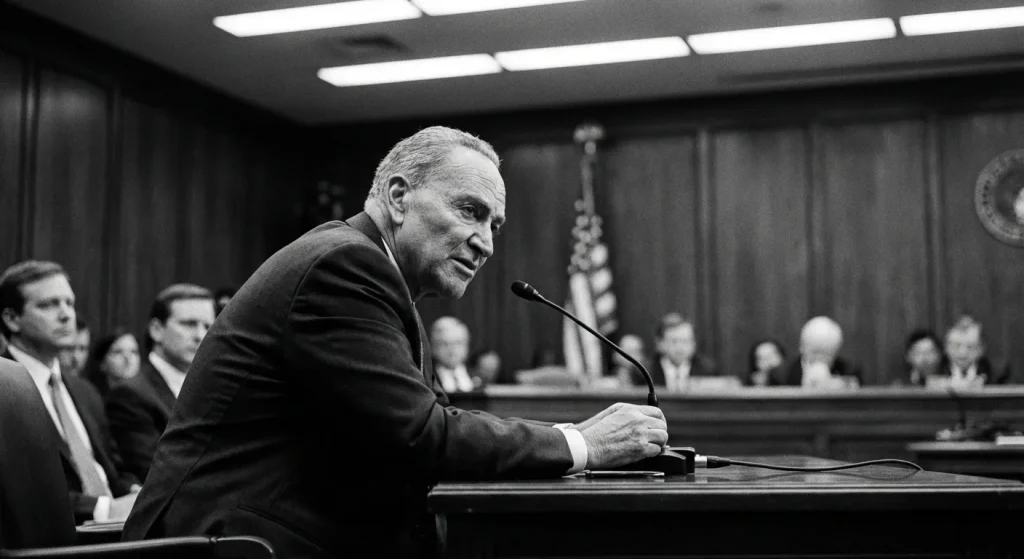

One durable reference point is Dr. Sean M. Kirkpatrick’s on-the-record Senate testimony on Wednesday, April 19, 2023, at a hearing of the Senate Armed Services Committee’s Subcommittee on Emerging Threats and Capabilities. The committee’s hearing notice and official record are the primary source for the video and transcript: Senate Armed Services Committee – Subcommittee hearing, April 19, 2023.

The other anchor is the ODNI/DoD consolidated annual UAP report dated October 17, 2023; the unclassified version is available as an official PDF from the offices that released it: Unclassified Report on Unidentified Anomalous Phenomena (Oct. 17, 2023).

You’ll walk away able to evaluate new claims against those anchors and spot what “resolution” would actually look like in the official record.

AARO’s mandate and Senate expectations

AARO isn’t built to “prove aliens”; it’s built to close identification gaps. That’s why the April 2023 Senate session wasn’t disclosure theater. It was oversight pressure meeting an office designed to standardize how reports get collected, worked, and briefed. The mandate sets hard limits on what AARO can credibly deliver in public: disciplined process, case disposition where evidence supports it, and clear statements about what data is missing when it can’t close a file.

The job in practice looks like a pipeline. Reports come in from operators and sensors, then get triaged so the highest-risk cases (aviation safety, potential adversary tech, sensitive airspace) move first. Analysts correlate tracks, imagery, and contextual intel, then coordinate across DoD and the Intelligence Community to chase explanations that sit outside one service’s view. The output isn’t a vibe check; it’s reporting with evidentiary thresholds: what was observed, what was ruled out, what remains unresolved, and why. When Kirkpatrick talks about cases resolving into mundane categories like balloons, airborne trash, or birds, that’s the office doing exactly what it was chartered to do: reduce unknowns with defensible attribution.

Congress didn’t jump straight to AARO by accident. The lineage matters: UAPTF led to AOIMSG, which led to AARO, formally established by DoD in 2022 through department announcements and implementing guidance. That evolution signals two priorities: continuity (cases and data don’t reset with each rebrand) and centralization (one intake and analytic hub instead of fragmented, stovepiped handling). The point is reporting discipline: fewer orphaned incidents, more consistent standards, and a clearer chain for accountability.

All-domain means the office has to deal with air, sea, undersea, space, and transmedium incidents under one roof. That scope is powerful, but it also guarantees friction: the best data is often classified, sensors are optimized for different environments, and a “single event” can span domains. So oversight is as much about process transparency as it is about headline answers.

The forum was an open hearing and briefing to the Senate Armed Services Committee’s Subcommittee on Emerging Threats and Capabilities, with Kirkpatrick appearing on April 19, 2023 as the sole witness. An open setting signals expectations: explain the machinery, not just the mysteries. In that lane, senators typically push for measurable accountability, such as how many reports came in, how quickly they were triaged, how many were closed with high confidence, and what persistent intelligence gaps are blocking resolution.

Use a simple rule: treat AARO updates as process plus evidence, not a yes-or-no referendum on “alien disclosure.” If a statement names the data sources, describes correlation work, and explains why a case is closed or still open, it’s doing its job. If it can’t go further publicly, the meaningful question is which evidentiary pieces (sensor access, classification, cross-agency sharing) are still missing, because that’s where oversight pressure is aimed.

That framing matters because it sets the yardstick for everything else people argue about from the hearing: not what you wish the record said, but what AARO says it can support with data.

Kirkpatrick’s core claims under oath

Kirkpatrick’s Senate testimony read less like a “reveal” and more like a standards memo delivered under oath: what counts as strong data, what doesn’t, and how AARO talks about the uncertainty that remains after you apply disciplined attribution. That framing is exactly where a lot of online takes go wrong. People want a binary answer. The testimony lives in evidence quality, corroboration, and careful language about what’s known versus what’s merely alleged.

On outcomes, the thrust of what’s attributed to his testimony is simple: many reports can be identified as ordinary things once AARO works them with enough context. The list of “normal” answers is not exotic: balloons, airborne trash, and birds. Historically, folklore explanations such as “swamp gas” have appeared in public discussion of anomalous sightings, but that phrase should not be attributed to Kirkpatrick without a verbatim transcript line.

Other media reporting of the same basic point broadens that mundane picture (including drones and aircraft) and notes that a lot of military UAP reporting in practice gets discussed in the context of foreign spying or everyday clutter rather than anything extraordinary.

And the taxonomy AARO and ODNI used around that period explicitly contemplated prosaic buckets such as space junk and climate or atmospheric idiosyncrasies, alongside classified categories. That’s a clue to how the office was thinking: categorize first, then argue from evidence, not vibes.

The most viral claims about what Kirkpatrick “said” usually hinge on evidence thresholds: how much sensor fidelity is enough, what kind of corroboration matters, and what AARO will and will not treat as persuasive. The problem is that the official transcript and hearing video are the sources you should consult for verbatim standards; they are not embedded in this article, so I am not putting precise evidentiary thresholds into his mouth here without quoting the record directly.

Practically, that’s the sourcing line you should adopt too: if someone’s takeaway depends on a precise evidentiary rule, you quote it verbatim from the official record or you don’t quote it at all.

Two topics get routinely inflated online: “non-human intelligence” and allegations of secret crash retrieval or reverse-engineering programs. Any “non-human intelligence” attribution has to be anchored to verbatim transcript lines, and the public record cited here does not include those official lines. So I am not laundering social clips into an on-the-record claim.

What is supported here is narrower: Kirkpatrick testified that AARO investigated allegations about UAP crash retrieval or reverse-engineering programs. If you want to repeat anything stronger than that, you need the official transcript passage, because this is exactly where people slide from “AARO looked into allegations” into “AARO confirmed a program,” which is a different claim with a different burden of proof.

- Pull the primary source first. If your post includes an extraordinary claim, it needs the official transcript line or the official hearing video timestamp, not a repost.

- Quote verbatim for big claims. Don’t paraphrase anything that changes the burden of proof (especially around attribution, adversary tech, or non-human claims).

- Paraphrase lightly for mundane summaries. “Many cases resolve to balloons, trash, or birds” is fine when you can point back to the official record (transcript/video) you’re using.

- Ask one habit-forming question. “Where’s the transcript line, and what evidence threshold is being claimed?” If you can’t answer both, don’t share it as fact.

Once you accept that the testimony is mostly about evidentiary standards, the next question becomes obvious: what, exactly, keeps some cases from meeting those standards?

What “anomalous” meant in practice

“Unresolved” is usually a data problem before it’s a theory problem, and that’s exactly why the “anomalous” bucket persists. Even with serious analysts and real sensor feeds, a case can stall simply because the record is thin, the context is missing, or the best details live behind a wall the wider community can’t see.

In practice, anomalous (as used by AARO/ODNI) functions less like a verdict and more like a filing category: the observation can’t be confidently attributed to a known object with the information available, so it stays unresolved until better data shows up. That “until better data shows up” part is doing most of the work.

You can see the operational intent in the way many cases do move out of “unknown” once there’s enough detail to match them to ordinary things. When the same pipeline can explain a lot of reports with mundane IDs, the remaining “anomalous” set is often the residue of incomplete evidence, not a separate physics category.

The fastest way for a report to become sticky is low-fidelity data plus missing context. If you don’t have time, location, altitude, the observing platform’s state, and the full sensor settings, analysts lose the ability to reconstruct what the sensor was actually “seeing” and what the geometry allowed. A dramatic clip without metadata is entertainment, not an investigation file.

Multi-sensor corroboration, meaning confirmation across independent sensors that don’t share the same failure modes, is the difference between “we detected something” and “we measured something.” Single-sensor reports are inherently fragile: one radar track, one infrared blob, one eyewitness account. Without a second modality to cross-check, you can’t separate a real target from a bad read, a processing quirk, or a mis-modeled environment.

That’s where sensor artifact problems show up. A sensor artifact is a false feature introduced by hardware limits, software processing, display scaling, compression, or operator interpretation. If you only see the post-processed output, especially a cropped video or a screenshot of a scope, you’re often evaluating the sensor’s user interface choices rather than the underlying signal.

Geometry creates its own trapdoors. Parallax and observer effects can make a slow object look fast, make a distant object look close, or make a steady object look like it’s maneuvering. When you combine a moving observer (aircraft, ship, satellite) with unknown range, your brain fills in speed and acceleration that the data never actually measured. Unresolved cases love this gap because it produces confident narratives from ambiguous measurements.
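
To make the range ambiguity concrete, here is a minimal sketch of the arithmetic, not a model of any real sensor or case. It assumes flat geometry, a stationary object, and an observer flying in a straight line; the function names and the example numbers are hypothetical. The apparent angular rate is roughly observer speed divided by range, and the "speed" a viewer infers is that angular rate multiplied by whatever range they assume.

```python
import math


def angular_rate(observer_speed_mps, range_m, bearing_deg=90.0):
    """Apparent angular rate (rad/s) of a STATIONARY object seen from a
    moving observer. bearing_deg is the angle between the observer's
    velocity and the line of sight (90 = directly abeam)."""
    return observer_speed_mps * math.sin(math.radians(bearing_deg)) / range_m


def implied_speed(angular_rate_rad_s, assumed_range_m):
    """Cross-track speed someone would infer if they attributed all of the
    apparent motion to the object and guessed its range."""
    return angular_rate_rad_s * assumed_range_m


# Hypothetical numbers: aircraft at 200 m/s, object actually 2 km away, not moving.
omega = angular_rate(200.0, 2000.0)

for guessed_range_m in (2_000, 10_000, 20_000):
    speed = implied_speed(omega, guessed_range_m)
    print(f"assumed range {guessed_range_m:>6} m -> implied speed {speed:7.0f} m/s")
```

The same angular rate maps to very different implied speeds depending on the assumed range, which is why a clip without range data cannot support a speed claim on its own.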

Electronic warfare is another documented consideration that investigators have to keep on the table. Spoofing and jamming can create convincing “targets” or degrade the data stream so badly that you can’t discriminate signal from noise. Mentioning that doesn’t imply an adversary did it in any given case; it just acknowledges that modern battle spaces include deliberate deception as a design feature.

Finally, there’s the classification barrier: cases where the most informative details can’t be broadly shared for sources-and-methods reasons. Even when analysts have access, classification can block wider verification, peer review, and outside reanalysis, which slows resolution and keeps the public-facing record stuck at “unresolved” even if someone, somewhere, has a stronger view.

Two bad shortcuts show up online: “unresolved therefore aliens” and “unresolved therefore nothing.” Both miss what makes these cases worth tracking. For aviation safety, an unknown object near training ranges and flight paths is a hazard regardless of origin. For intelligence, persistent unknowns can expose sensor coverage gaps and vulnerability to spoofing. And for public trust, a system that can explain many reports but leaves a remainder unexplained needs to show its work clearly, or the vacuum gets filled by rumor.

The base-rate lesson is simple: when investigators can match reports to mundane categories, you should assume the unresolved tail will continue to shrink as data improves. “Anomalous” is the prompt to demand better collection and cleaner context, not a conclusion about exotic technology.

- Ask for corroboration: Do you have multi-sensor corroboration, or is it a single feed (one camera, one radar, one witness)?

- Demand metadata: Time, location, altitude, heading, speed, sensor mode, zoom level, and environmental conditions change everything.

- Check chain-of-custody: Is this the original file, or a repost, crop, screen recording, or compressed copy?

- Separate raw from processed: Can anyone reanalyze the underlying data, or are you stuck with a display artifact and no audit trail?

- Account for geometry: Do you know range, observer motion, and viewing angles well enough to rule out parallax?

- Consider deception without leaping: Is spoofing or jamming plausible in the environment, and does the data quality support or reject that?

- Note access limits: Is a classification barrier likely preventing the key details from being shared or independently checked?
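
If you want to apply the checklist above consistently, one option is to write it down as a small record and list what is still missing for a given clip or report. This is only an illustrative sketch: the field names are hypothetical shorthand for the bullets above, not an AARO or ODNI schema.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class EvidenceChecklist:
    """One flag per checklist bullet; every field name here is hypothetical."""
    multi_sensor_corroboration: bool = False
    metadata_complete: bool = False
    original_file_custody: bool = False
    raw_data_reanalyzable: bool = False
    geometry_known: bool = False
    deception_considered: bool = False
    access_limits_noted: bool = False


def open_questions(check: EvidenceChecklist) -> List[str]:
    """Return the names of checklist items that have not been satisfied."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


# Example: a viral clip where only the original file has been located.
clip = EvidenceChecklist(original_file_custody=True)
for item in open_questions(clip):
    print("still missing:", item)
```

An empty list does not mean a case is explained; it only means the basic questions have been asked and answered.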

Those mechanics explain why an incident can stay officially “unresolved.” But in 2023, the debate didn’t stay technical for long, because unresolved cases also became a proxy fight over who inside the system was being heard.

Whistleblowers and congressional pushback

Once whistleblowers entered the picture, “unresolved” stopped being a technical word and became a political accusation. The same unresolved gap that AARO frames as a data and attribution problem (not enough information to identify an object) was reinterpreted in Washington as a credibility and trust problem: Are the right people reporting what they know, are they safe doing it, and is Congress getting the full story?

Kirkpatrick’s April 2023 testimony sat right at that hinge point. By the time the 2023 to 2024 disclosure wave picked up speed, the public argument wasn’t just “what are these cases,” it was “who’s telling the truth about what exists behind classified walls.”

David Grusch’s public claims surfaced in June 2023 through media interviews, framing his account as secondhand information he said he received from other officials about an alleged, highly restricted UAP retrieval and storage effort. That media context mattered because it moved the debate from abstract “unresolved sightings” to a specific allegation about government programs.

Then Grusch appeared under oath at a House Oversight and Accountability subcommittee hearing on July 26, 2023, a record that anchors what he actually said in a sworn, on-the-record setting. In that public hearing context, he alleged “non-human” recovery, while also drawing a clear boundary around what he said he could only provide in classified settings. That combination, headline-level claims paired with limited public documentation, is exactly why the conversation turned into a trust fight. Credible-sounding claims can coexist with thin public evidence when the claimed details sit behind classification rules.

Pushback didn’t require Congress to “pick a side” on aliens. It showed up as oversight pressure: lawmakers questioning whether AARO was receiving full cooperation across the bureaucracy, whether existing reporting channels were trusted by potential witnesses, and whether Congress had enough leverage to force information into authorized oversight lanes.

One concrete pressure valve was statutory: the FY2023 NDAA created UAP whistleblower protections (Section 1673), codified at 50 U.S.C. § 3373b. In plain terms, it’s designed to let personnel report UAP-related information through authorized channels, with anti-retaliation protections, so disclosures can move into inspectors general and appropriate congressional oversight without forcing people to “go public” to be heard.

- Anchor your understanding in what’s sworn and transcribed, meaning hearing records and written statements, not paraphrases.

- Separate allegations (what someone claims) from verification (documents, firsthand corroboration, or findings acknowledged by inspectors general).

- Track whether key steps are happening inside the system: IG processes initiated, classified briefings conducted, and those briefings acknowledged on the record.

- Distrust unsourced certainty in either direction. “It’s all true” and “it’s all fake” are both red flags when the public evidence is incomplete by design.

Once Congress starts treating the issue as a trust-and-access problem, the next question is predictable: what mechanisms actually force records to surface, get reviewed, and (when possible) get released?

Disclosure laws and transparency mechanisms

If you want real UAP transparency, watch the paperwork, not the podcasts. When unresolved cases persist, Congress’s main lever isn’t “answers on TV”; it’s process: who has to report what, how records get handled, and what declassification pathways exist when the public asks, “Show us what you found.” The friction is that you can have real investigations and still get very little public detail, because classification rules protect sources and methods even when the underlying event is mundane. The only durable way to change the output is to change the inputs and the rules that govern them.

In NDAA language, Congress can force a steady cadence of reporting, standardize how data is collected, and reduce “we can’t find it” excuses by tightening record retention and search requirements. In practical terms, that’s how you get fewer one-off briefings and more repeatable artifacts you can compare year over year: consistent public reports, consistent classified annexes, and consistent definitions for what gets logged and escalated.

One of the most concrete transparency levers described in enacted reporting language is classification governance itself: the NDAA requires AARO to account for all security classification guides that govern UAP-related reporting and investigations. That matters because classification guides determine what a briefer is even allowed to say in an unclassified setting, what can be shared with state partners, and what can be released after a declassification review. If those guides are overly broad, the public record stays thin even when Congress is getting briefed in depth.

The Schumer-Rounds UAP Disclosure Act concept, as proposed, aimed to build a structured records-disclosure process with review mechanisms, so UAP-related records would be identified, queued for review, and either released or formally withheld under defined standards. The point wasn’t “instant disclosure”; it was forcing a predictable pipeline: collect the records, review them, publish what can be published, and document what cannot.

But proposals and enacted text are different products. Negotiations can strip, narrow, or replace entire mechanisms. So treat any description of a “disclosure framework” as a hypothesis until you check what actually made it into the final FY2024 NDAA’s enacted text.

- Read the enacted statutory text that governs AARO reporting, not just press releases or summaries.

- Compare the annual unclassified reports year over year and look for stable categories, clearer sourcing notes, and consistent accounting of totals versus “unknowns.”

- Track whether updated security classification guides are acknowledged and inventoried, because that is the bottleneck that decides how much detail can safely move into public view.

- Watch for explicit mentions of declassification review activity, released data sets, or formal “cannot release” determinations. Those are signals of a working transparency pipeline, not just messaging.

That’s the framework. The day-to-day reality is still messier, so the most useful question for the next couple of years is whether AARO’s process produces better inputs (data) and better outputs (public-facing clarity).

What to watch in 2025 and 2026

The next two years won’t be decided by one dramatic “UFO sightings 2025/2026” clip. The real test is whether data quality and process transparency improve.

To keep the scale straight, calibrate your expectations against volume, not vibes: DoD’s All-domain Anomaly Resolution Office (AARO) has stated it received more than 1,600 reports as of June 1, 2024. See AARO’s public update for the official phrasing and source: AARO public statement on reports received (as of June 1, 2024). Treat that number as context for triage and throughput, not as proof of any single explanation.

Virality can spike while evidentiary standards stay flat, so watch for boring-but-decisive process moves: documented methodology you can quote, tighter language about what data was reviewed, and declassification releases that ship with context and metadata instead of just a highlight reel. Even in unclassified AARO/ODNI-style reporting, the real tell is whether they show their work when they sort cases into concrete buckets.

If you’re trying to track reporting cadence, don’t rely on secondhand summaries. Go straight to the controlling statute in the relevant NDAA and authorizing language and confirm what’s mandated as unclassified public reporting versus what is reserved for classified briefings or annexes. If a claim about “due dates” doesn’t cite the actual section that creates the obligation, treat it as commentary, not a schedule.

What counts as a genuine upgrade in 2025 to 2026 is straightforward: multi-sensor corroboration (for example, radar plus EO/IR), a documented chain of custody for the raw files, clear retention and accessibility rules (who can retrieve originals later), and a path for independent replication or analysis (even if the public only gets redacted subsets).

AARO has said it identified many investigated reports as ordinary objects or misidentifications. A new clip only matters if it comes with the unsexy paperwork: timestamps, sensor mode, platform details, and provenance. Anonymous claims without primary data are just claims.

- Ask: Is there multi-sensor confirmation, or only one video?

- Verify: Is the original file referenced, not a screen recording?

- Demand: Is chain of custody described from collection to analysis?

- Check: Is metadata present (time, location, sensor settings)?

- Look: Is there a retention/access path for later review?

- Prefer: Named, accountable sourcing over anonymous leaks.

Conclusion

Kirkpatrick’s 2023 testimony remains a pivot point because it put official standards on the record, yet the unresolved residue keeps the controversy alive.

The April 19, 2023 hearing is still the on-the-record anchor: it’s where the expectations for what “counts” as a serious case were stated in a setting built for oversight. The recurring public baseline is the ODNI/DoD consolidated annual UAP report, “Unclassified Report on Unidentified Anomalous Phenomena” (Oct. 17, 2023), which you can stack against later editions to see what actually changes versus what just cycles in headlines. The same stress test for trust from the start of this conversation still applies: AARO reports progress and many prosaic resolutions, yet a residual unresolved set persists largely due to data and visibility constraints, and Congress keeps pressure on through reporting mandates and other oversight tools.

If you do three things, you’ll stay grounded: bookmark the official Senate hearing record (transcript and video) for exact language; bookmark the ODNI/DoD annual reports and track trendlines across years; and keep the enacted NDAA statutory text handy so you can match agency deliverables to the mandates. Treat new claims as meaningful when they come with multi-sensor data, a clear chain-of-custody, and a real declassification pathway.

Keep the debate evidence-led, and you’ll be able to sort solid disclosure signals from noise as 2025 and 2026 UFO news keeps coming; if you want, keep following this blog for updates.

Frequently Asked Questions

- What is AARO and what is it supposed to do with UAP reports?

AARO (the All-domain Anomaly Resolution Office) is designed to standardize intake, triage, analysis, and case closure for Unidentified Anomalous Phenomena (UAP) reports. The article says its mandate is to close identification gaps and report what was observed, what was ruled out, and why a case is still unresolved when data is missing.

- When was Dr. Sean Kirkpatrick’s Senate testimony on UAP, and which committee held the hearing?

The testimony took place on Wednesday, April 19, 2023 at 11:08 a.m. in Room SR-232A of the Russell Senate Office Building in Washington, D.C. It was an open hearing and briefing for the Senate Armed Services Committee’s Subcommittee on Emerging Threats and Capabilities, with Kirkpatrick as the sole witness.

- What did Kirkpatrick say many UAP cases were actually identified as?

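The article states that many cases were resolved into mundane categories like balloons, airborne trash, and birds. It also notes other summaries that include drones and aircraft as common explanations, and it cautions that “swamp gas” is folklore shorthand that should not be attributed to Kirkpatrick without a verbatim transcript line.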

- What does “anomalous” or “unresolved” mean in AARO/ODNI reporting?

“Anomalous” functions as a filing category for observations that can’t be confidently attributed to a known object with the information available. The article emphasizes that unresolved cases are usually a data-quality and missing-context problem rather than a conclusion about exotic technology.

- What specific data or “specs” does AARO need to resolve a UAP case?

The article lists key metadata such as time, location, altitude, heading, speed, sensor mode, zoom level, and environmental conditions. It also highlights the need for multi-sensor corroboration (e.g., radar plus EO/IR) and a documented chain of custody for the original raw files.

- How many UAP reports has AARO received, according to the article?

The article says AARO stated it had received more than 1,600 reports as of June 1, 2024. It frames that number as context for triage and throughput rather than proof of any single explanation.

- What should I look for to judge whether a new “UFO disclosure” claim is credible?

The article says to anchor claims in primary sources like the official hearing transcript/video and the ODNI/DoD annual UAP report dated October 17, 2023 (available on DNI.gov, Defense.gov, or AARO.mil). It recommends treating new claims as meaningful only when they come with multi-sensor data, full metadata, and a clear chain-of-custody rather than cropped clips or secondhand summaries.