The story of official UAP investigation did not begin in the U.S. Congress. Yet across recent spikes in UFO-sighting coverage, hearing clips, and leak-driven UAP news, most reporting still begins in Washington and treats everything else as an afterthought. That makes it harder to tell which “UFO disclosure” claims are backed by institutional capability and which are just narrative momentum.

You are not missing some secret document. You are running into a reporting gap: the difference between public controversy and the slow, procedural work of building an office that can collect reports, protect sensitive data, and publish what can be verified. That gap is exactly where “government UFO cover-up” narratives thrive, because the public sees conclusions withheld without seeing the process that forces some withholding.

Here is the tension this piece resolves: transparency and public accountability versus operational security and intelligence equities. The public wants a verdict. Governments build an investigation pipeline instead, because UAP (unidentified anomalous phenomena) is not a conclusion. It is an observation or event whose cause cannot be identified from the data available at the time, which is precisely why formal triage, collection standards, and reporting rules matter. UFO (unidentified flying object) is the older umbrella term that still dominates media shorthand, even when the institutional conversation has shifted toward broader “all-domain” anomalies.

The timeline most readers never get is the institutional one. Peru’s Air Force-backed office is widely cited as standing up an official military capability to address unidentified aerial phenomena in December 2001, long before U.S. efforts became mainstream UAP coverage. The boundary of what is documented in the research provided here is equally clear: this section does not have the exact Peruvian legal instrument, decree number, or full Spanish title from an official gazette, only the repeated reporting that such a department was created in late 2001.

In the United States, the Department of Defense announced the establishment of the All-Domain Anomaly Resolution Office (AARO) in a press release dated July 20, 2022 (DoD release). Congress later set statutory timing and requirements: the FY2023 National Defense Authorization Act directed the Secretary of Defense, in coordination with the Director of National Intelligence, to establish or designate an AARO within 120 days of enactment, counted from December 23, 2022, and codified authorities and reporting obligations (FY2023 NDAA text). Subsequent congressional hearings and oversight commentary have focused on disclosure, process, and whether the office’s outputs meet statutory expectations.
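To make the statutory window concrete, the arithmetic can be checked in a few lines. This is a minimal sketch that assumes plain calendar-day counting from the December 23, 2022 enactment date cited above; how the statute's deadline is counted in practice may differ.

```python
from datetime import date, timedelta

ENACTMENT = date(2022, 12, 23)  # FY2023 NDAA enactment date cited in the text
WINDOW_DAYS = 120               # statutory window cited in the text

# Assumption: simple calendar-day counting, with no adjustment for
# weekends or holidays.
deadline = ENACTMENT + timedelta(days=WINDOW_DAYS)
print(deadline.isoformat())  # 2023-04-22
```

Under that assumption, the window closes on April 22, 2023.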

You will leave with a standard for judging “UAP disclosure” claims: demand a documented mandate, a defined reporting chain, and auditable public outputs, because an office is only as credible as the process it can prove it runs.

Why Peru acted in 2001

The gaps and muddled timelines in early UAP office histories make more sense once you anchor Peru’s 2001 decision in operations, not folklore. In an airspace used by traffickers and watched under real interdiction authorities, an intake and investigation capability for unidentified aerial reports functions like a security instrument: it helps commanders sort credible airspace problems from noise before that noise becomes an incident.

The practical driver was ambiguity under pressure. Peru was operating in a counter-trafficking environment where the government had adopted legal and operational measures authorizing interdiction of suspected drug-trafficking aircraft. U.S. reporting links increased U.S. assistance in 1993 to Peru’s implementation of Decree Law Number 25426, and U.S. and Peruvian documents describe Air Bridge Denial (ABD) and interdiction procedures used against aircraft suspected of drug trafficking (State Dept. report) (CIA ABD procedures). Against that background, Peruvian Air Force assessments from the period put the average number of international trafficking aircraft at roughly 270 (CIA ABD procedures) (State Dept. report).

Sovereignty is not an abstract talking point when rules allow force and neighboring states are actively destroying drug-trafficking planes in their own airspace. That posture raises the cost of getting it wrong in both directions: treating something mundane as hostile can trigger escalation, while dismissing a real penetrator can mean another load crosses unchallenged. The operational requirement is disciplined classification under rules of engagement, not a debate about what people “saw.”

The intelligence layer complicates it further. External surveillance and cueing can improve interdiction, but they also inject uncertainty. U.S. aerial tracking assets detected narcotics trafficking aircraft and provided information used to follow and intercept them, and U.S. surveillance also identified apparently legitimate flights, including a missionary plane, as possible drug-smuggling flights. Those are classic signal-versus-noise conditions: the same sensors and analyst judgments that find illicit traffic can also generate false positives that push decision-makers toward the wrong target (NSArchive summary) (CIA ABD procedures).

“Official” in this article means an institutional unit tied to the Peruvian Air Force with a mandate to receive, evaluate, and archive reports so they can be compared against other airspace data over time. That matters because public reporting pressure is real, and it does not arrive neatly pre-filtered. Some later news coverage of Peru’s revived effort in 2013 described prior closures or reorganizations of Ministry of Defence and Air Force reporting efforts; that coverage cited earlier activity but did not include primary decree or gazette documents in the available sources (The Guardian) (Phys.org). The provided research did not surface an official Ministry of Defence record explicitly documenting a prior hotline and its formal shutdown.

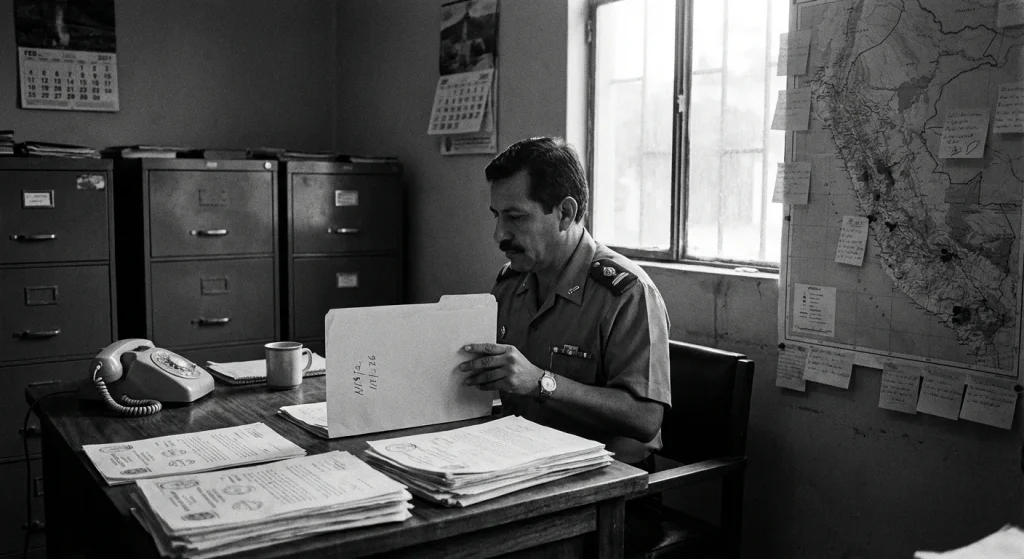

The seriousness of Peru’s airspace problem shows up in who gets formally tasked. The Government of Peru designated Peruvian Air Force Major General Jorge Kisic Wagner, Commander of Operations, as the team leader, a signal that senior operational command was engaged on airspace and security decision-making rather than treating aerial reports as a public-relations curiosity.

The right way to judge any such office is straightforward: does it improve decision-quality and safety in a high-stakes air picture shaped by interdiction authorities, procedural constraints, and hundreds of trafficking flights? If it reduces false positives, preserves comparable records, and helps prioritize what deserves aircraft, radar time, and command attention, it is doing its job.

That operational logic explains why Peru would want a standing capability. The harder question is what, precisely, the office was authorized to do and what procedures it followed, because those details determine whether “official” means more than a name.

OIFAA mandate and workflow

Serious UAP investigation is an operational workflow, not an opinion. A standing military office earns credibility through repeatable procedure; without that, “UAP news” collapses into anecdotes. None of the provided sources contain OIFAA’s founding or charter documents or any statement about OIFAA’s official mandate or scope. So what follows is a typical operational model for a standing capability, not confirmed OIFAA specifics.

Ad hoc handling fails for a predictable reason: volume and inconsistency. The earlier hotline-and-response model illustrates how quickly an intake channel becomes unmanageable when it is not backed by disciplined filtering, documentation standards, and evidence controls.

A second failure mode is informal compilation. In Peru, one aerospace-history institute was preparing and publishing sighting books assembled from clippings and reports, which preserves cultural history but does not produce an auditable investigative record. A standing office exists to do the opposite: separate safety and security signals from background noise, then preserve the underlying data so conclusions can be revisited.

This is where aviation-style investigative principles become useful. The relevant ICAO document in the provided research is presented as an unedited advance version of an ICAO publication, approved in principle by the ICAO Secretary General and made available on Skybrary. That framing supports a safety-oriented mindset: disciplined collection, corroboration, and documentation, without over-claiming any specific ICAO mandate for UAP.

A typical standing model runs as a controlled pipeline. The friction is that every stage has to function even when witnesses are stressed, sensors are missing, or the event touches classified capabilities.

- Intake: Accept reports through controlled channels (operations centers, unit reporting, aviation safety systems). The practical deliverable is a standardized case record with time, location, unit, mission context, and any known sensor sources, created immediately while memories and logs are fresh.

- Triage: Perform rapid initial sorting to prioritize reports based on safety, security, and data quality. This is where a standing office earns its keep, because it prevents scarce analytic time from being consumed by low-information narratives while elevating events with collision risk, airspace intrusion, or strong multi-sensor data.

- Interview and data capture: Conduct structured interviews and capture raw artifacts: radio calls, kneeboard notes, cockpit video, track data exports, maintenance logs, weather products, and duty rosters. The non-obvious constraint is that good interviewing is not casual conversation; it is a repeatable script designed to reduce contamination from leading questions and post-event rumor.

- Corroboration and sensor checks: Validate the report against independent sources: ATC recordings, radar and ADS-B where applicable, electro-optical and infrared logs, electronic support measures, and meteorology. Missing data is common; a standing office documents absence explicitly so later reviewers can see whether the gap was technical, procedural, or classification-driven.

- Analytic write-up: Produce a structured assessment that separates observations (what was seen or recorded) from inferences (what it might be). The output is a short, citable product with a confidence statement grounded in data completeness, not in personality-driven certainty.

- Archival and classification handling: Store the complete case file with access controls, retention rules, and searchable metadata. This is where a standing capability stops being a news cycle and becomes an institutional record.
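The stages above can be sketched as a small state machine with a triage score. This is an illustrative model only: the `CaseRecord` fields, the `Stage` names, and the scoring weights are assumptions made for the example, not documented OIFAA or AARO practice.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Stage(IntEnum):
    """Pipeline stages in the order described in the list above."""
    INTAKE = 1
    TRIAGE = 2
    INTERVIEW = 3
    CORROBORATION = 4
    WRITE_UP = 5
    ARCHIVE = 6


@dataclass
class CaseRecord:
    """Hypothetical standardized case record created at intake."""
    case_id: str
    observed_at: str            # ISO 8601 timestamp
    location: str
    unit: str
    sensor_sources: list = field(default_factory=list)
    safety_risk: bool = False
    airspace_intrusion: bool = False
    stage: Stage = Stage.INTAKE
    history: list = field(default_factory=lambda: [Stage.INTAKE])

    def advance(self) -> Stage:
        """Move to the next stage; stages cannot be skipped."""
        if self.stage is Stage.ARCHIVE:
            raise ValueError("case is already archived")
        self.stage = Stage(self.stage + 1)
        self.history.append(self.stage)
        return self.stage


def triage_priority(case: CaseRecord) -> int:
    """Score a case on safety, security, and data quality.

    The weights are illustrative assumptions, not a published standard.
    """
    score = 0
    if case.safety_risk:
        score += 3
    if case.airspace_intrusion:
        score += 2
    if len(case.sensor_sources) >= 2:
        score += 2   # multi-sensor data
    elif len(case.sensor_sources) == 1:
        score += 1
    return score


example = CaseRecord(
    case_id="CASE-0001",                  # placeholder values throughout
    observed_at="2024-01-01T00:00:00Z",
    location="example region",
    unit="example unit",
    sensor_sources=["radar", "infrared"],
    safety_risk=True,
)
print(triage_priority(example))  # 5
print(example.advance().name)    # TRIAGE
```

Forcing every case through `advance()` one stage at a time is the point of the design: a file cannot reach the archive without a recorded pass through triage and corroboration.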

Credible investigation requires evidence integrity, not just conclusions. The core mechanism is chain of custody: a documented record of how evidence was collected, handled, stored, and transferred. Without it, even high-quality sensor data becomes operationally useless because nobody can prove what changed hands, when, and under what controls.
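One way to picture chain of custody is a hash-linked log, where each handling event records a digest of the artifact and a digest of the previous event. The sketch below is a simplified illustration using Python's standard `hashlib`; the `CustodyEvent` structure and field names are hypothetical, and a real evidence system would add signatures, access controls, and secure storage.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class CustodyEvent:
    """One hypothetical custody entry for a single artifact."""
    artifact_sha256: str   # digest of the raw artifact bytes
    action: str            # e.g. "collected", "transferred", "stored"
    handler: str
    timestamp: str         # ISO 8601
    prev_hash: str         # hash of the previous event, "" for the first

    def event_hash(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_event(log, artifact: bytes, action: str, handler: str, timestamp: str):
    """Return a new log with one more event linked to the previous one."""
    prev = log[-1].event_hash() if log else ""
    digest = hashlib.sha256(artifact).hexdigest()
    return log + [CustodyEvent(digest, action, handler, timestamp, prev)]


def verify_log(log, artifact: bytes) -> bool:
    """Check that the artifact is unchanged and no event was altered."""
    digest = hashlib.sha256(artifact).hexdigest()
    expected_prev = ""
    for event in log:
        if event.prev_hash != expected_prev or event.artifact_sha256 != digest:
            return False
        expected_prev = event.event_hash()
    return True


raw = b"example sensor export"   # placeholder artifact bytes
log = append_event([], raw, "collected", "analyst A", "2024-01-01T00:00:00Z")
log = append_event(log, raw, "transferred", "analyst B", "2024-01-02T00:00:00Z")
print(verify_log(log, raw))                 # True
print(verify_log(log, raw + b" edited"))    # False
```

Because each entry depends on the one before it, editing an earlier handoff or the artifact itself causes verification to fail, which is the property that lets a later reviewer trust what changed hands and when.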

A standing office also needs institutional memory: a consistent case format that lets analysts compare across years and identify repeating patterns. Reporting a “first officially documented” case, as the Peruvian Air Force’s DIFAA was described as doing, matters less for headlines than for the precedent it sets: a documented case is reviewable, teachable, and auditable.

Public access is often limited because the file may contain classified sources and methods, unit locations, or sensor performance details. In U.S. practice described in NARA and OGIS materials, OGIS is charged by FOIA to review agencies’ FOIA policies, procedures, and compliance, and agencies prefer that requesters seeking access to classified records file Freedom of Information Act (FOIA) or Mandatory Declassification Review (MDR) requests. The existence of formal pathways does not mean rapid disclosure; it means disclosure is governed by process and review.

Even outside intelligence content, personnel and military-related records often have restricted access categories. That reality shapes what a standing office can release without compromising privacy, safety, or operational security, and it reinforces why disciplined internal archiving is non-negotiable.

- Verify intake: Can the office show a standardized case record created at report time?

- Demand triage: Can it demonstrate prioritization rules tied to safety, security, and data quality?

- Audit evidence handling: Can it document chain of custody and controlled storage for raw artifacts?

- Require corroboration: Can it cite independent sensor and log checks, including explicit data gaps?

- Insist on write-ups: Can it separate observation from inference in a structured product?

- Confirm retention: Can it archive cases with metadata, access controls, and reviewability?

If an office cannot demonstrate disciplined intake, prioritization, and evidence handling, it is not an investigative capability. It is a mailbox.

What investigations can actually deliver

An office can run a disciplined investigative process and still disappoint people expecting cinematic certainty. The most important product of a UAP office is not a headline, it is disciplined uncertainty management: sorting reports into defensible categories, explaining why, and being explicit about what the evidence can and cannot support.

The friction is structural. Many reports arrive as single-witness accounts, after the fact, with no original sensor logs, no raw imagery, no time-synced metadata, and no independent corroboration. Add post-event memory contamination (people reframe what they saw after news coverage, social media threads, or leading questions) and you get a familiar investigative outcome: a case file with genuine ambiguity that cannot be responsibly forced into a tidy conclusion. Even real offices that take public reporting seriously have had to grapple with that reality over time.

Most casework ends in one of three buckets:

- Identified and resolved: A specific explanation fits the observations and the available data (time, location, direction of travel, sensor context) without special pleading.

- Insufficient data: There is not enough information to reach an identification that would survive scrutiny. This is not a mysterious conclusion, it is an evidence-quality conclusion.

- Unresolved but open: The file contains some credible elements, but the office cannot close it without better data or additional corroboration.

Unresolved does not automatically imply non-human intelligence. It means the office is refusing to invent certainty. The disciplined move is to keep the case open and state what would be required to resolve it.
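The three buckets can be expressed as a small decision rule that also records what would be needed to close a case. This is a sketch of the logic as described here, not any office's actual criteria; the function name, inputs, and wording of the "needs" list are assumptions.

```python
from enum import Enum


class Disposition(Enum):
    IDENTIFIED = "identified and resolved"
    INSUFFICIENT_DATA = "insufficient data"
    UNRESOLVED_OPEN = "unresolved but open"


def classify(explanation_fits: bool, has_original_data: bool,
             independent_sources: int):
    """Return (disposition, what would be required to resolve the case).

    Assumed rule: a fitting explanation closes the case; otherwise a file
    with some credible element (original data or corroboration) stays
    open, and a file with neither is an evidence-quality dead end.
    """
    if explanation_fits:
        return Disposition.IDENTIFIED, []

    needs = []
    if not has_original_data:
        needs.append("original sensor logs or raw imagery")
    if independent_sources < 1:
        needs.append("independent corroboration")

    if has_original_data or independent_sources > 0:
        if not needs:
            needs.append("additional data that discriminates between remaining hypotheses")
        return Disposition.UNRESOLVED_OPEN, needs
    return Disposition.INSUFFICIENT_DATA, needs


disposition, needs = classify(explanation_fits=False,
                              has_original_data=True,
                              independent_sources=0)
print(disposition.value)  # unresolved but open
print(needs)              # ['independent corroboration']
```

Note that no branch produces an "extraordinary" verdict: the open bucket only lists missing evidence.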

Early investigative passes are dominated by routine misidentifications because perception is lossy and the sky is crowded. Common pathways show up repeatedly:

- Astronomy: Venus, bright stars, and the Moon misread as hovering lights, especially through zoomed phone video.

- Balloons and drifting objects: Slow movement plus wind shear creates apparent maneuvers when distance is misjudged.

- Drones: Navigation lights and abrupt direction changes at short range look exotic without scale cues.

- Aircraft: Landing lights, contrails at altitude, and perspective effects make normal flight profiles look anomalous.

- Sensor artifacts: Autofocus hunting, rolling shutter, compression, lens flare, and radar track quirks generate “objects” that are really instrument behavior.

Real investigations treat these as baseline hypotheses to eliminate, not as dismissive excuses.

Credibility comes from categorization, confidence levels, and documented reasoning rather than dramatic conclusions. A stated confidence level is an accountability tool: a declared degree of certainty attached to an identification or assessment based on evidence quality and corroboration, not on how compelling the story sounds. Responsible briefings show the chain of reasoning, note missing data, and avoid narrative leaps beyond what the record supports.

One hard constraint applies here: none of the provided sources contain quantitative outputs or a published breakdown of outcomes (identified vs insufficient data vs unresolved) from OIFAA, nor do they report numbers of reports received or investigated by OIFAA for specific years. Any claim implying published OIFAA outcome statistics is unsupported by the material in this article.

- Check data quality: Does the claim include original logs, metadata, and time-location specifics, or only anecdotes and cropped clips?

- Check corroboration: Is there independent confirmation across witnesses or sensors, or a single narrative?

- Check stated confidence: Does the source declare how sure it is and why, or does it jump straight to conclusions?

If a “UFO disclosure” claim lacks data quality, corroboration, and a stated confidence level, treat it as unverified.
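The three checks can be applied mechanically. The sketch below assumes a strict reading in which a claim missing any one of the three elements is treated as unverified; the `Claim` fields are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """Hypothetical summary of what a disclosure claim actually provides."""
    has_original_data: bool            # original logs, metadata, time and location
    independent_sources: int           # confirming witnesses or sensors beyond the first
    stated_confidence: Optional[str] = None   # e.g. "moderate", with reasons


def review(claim: Claim) -> list:
    """Return the list of failed checks; an empty list means all three pass."""
    problems = []
    if not claim.has_original_data:
        problems.append("data quality: no original logs or metadata")
    if claim.independent_sources < 1:
        problems.append("corroboration: single narrative only")
    if not claim.stated_confidence:
        problems.append("confidence: no stated level or reasoning")
    return problems


def is_unverified(claim: Claim) -> bool:
    # Assumption: failing any single check is enough to withhold belief.
    return bool(review(claim))


clip_only = Claim(has_original_data=False, independent_sources=0)
print(is_unverified(clip_only))  # True
print(len(review(clip_only)))    # 3
```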

Those limits are not abstract. They shape what any office can responsibly say in public, and they are exactly why modern disclosure debates keep returning to institutional design rather than individual cases.

OIFAA versus today’s UAP era

The disclosure era is now an institutional design problem. Modern UAP disclosure fights are no longer about whether reports exist; they are about whether institutions can ingest them at scale, triage them consistently, protect sensitive sources and methods, and still communicate conclusions in a way the public can audit. Peru’s early signal was that a state can acknowledge the reporting problem; the U.S. UAP era tests whether a statutory office can run the reporting pipeline credibly without collapsing under the weight of expectation.

AARO (All-Domain Anomaly Resolution Office) matters because its cross-domain remit is built into its design: it is a Department of Defense office, announced by DoD in 2022 (DoD release), and later reinforced through statute-level requirements in the FY2023 NDAA that define authorities, organization, and reporting (FY2023 NDAA text). That statute-level scaffolding changes incentives: leaders can no longer treat UAP work as a temporary task force that fades when attention shifts. The tradeoff is equally real: once reporting requirements exist, every gap between the mandated promise and the visible output becomes a political vulnerability.

AARO accepts reports of U.S. Government programs or activities related to UAP from current and former U.S. Government employees, service members, or contractors; eligibility and submission guidance are published on the AARO website and submission portal (AARO) (AARO Submit-A-Report). The Department of Defense also announced a secure reporting mechanism intended for government personnel and described public outreach steps, while noting that a separate channel for the general public would be announced in follow-on work (DoD reporting announcement).

That eligibility rule is an institutional statement: disclosure is not just about sightings, it is also about allegations of hidden programs, procurement, or legacy activity that would never appear in an incident log. It also has a practical consequence: the official AARO submission portal is designed for current and former U.S. Government personnel and contractors, not as an unrestricted public tip line; the AARO site and DoD announcements are the authoritative sources on who may submit and how (AARO Submit-A-Report) (DoD reporting announcement).

Once Congress makes UAP a repeat hearing topic, public expectations stop tracking the evidentiary standard used inside the system. Congressional oversight has repeatedly focused on process and disclosure, which raises the bar for AARO to show its work without turning every unanswered question into an implied admission.

Whistleblower-driven narratives intensify that pressure. David Grusch presented himself as a whistleblower and, in testimony context, alleged “nonhuman” biologics at crash sites and alleged retaliation for coming forward. Those are claims, not adjudicated findings, and “unidentified” still means unresolved, not confirmed non-human intelligence. The institutional takeaway is sharper: statutes and submission tools create a demand signal that hearings amplify, and credibility depends on whether the office can separate allegation intake from evidentiary validation without appearing to stonewall.

Use a rubric that ignores personalities and survives the next news cycle: (1) legal mandate, including who the office reports to and what deadlines exist; (2) reporting channels, including who can submit and how data is logged; (3) publication cadence, meaning what must be released and how often; and (4) evidence standards, meaning what counts as corroboration and what must remain classified. Compare disclosure regimes on those four variables, and the institutional story becomes legible.
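The four-variable rubric lends itself to a side-by-side table. The sketch below is illustrative: the `DisclosureRegime` class is a hypothetical structure, and the two example offices are placeholders rather than assessments of any real organization.

```python
from dataclasses import dataclass

# The four rubric variables named in the text.
RUBRIC = ("legal_mandate", "reporting_channels",
          "publication_cadence", "evidence_standards")


@dataclass(frozen=True)
class DisclosureRegime:
    """True means the variable is publicly documented for that regime."""
    name: str
    legal_mandate: bool
    reporting_channels: bool
    publication_cadence: bool
    evidence_standards: bool


def gaps(regime: DisclosureRegime) -> list:
    """List the rubric variables a regime has not documented."""
    return [v for v in RUBRIC if not getattr(regime, v)]


def compare(a: DisclosureRegime, b: DisclosureRegime) -> dict:
    """Map each rubric variable to the pair (a's value, b's value)."""
    return {v: (getattr(a, v), getattr(b, v)) for v in RUBRIC}


# Placeholder regimes for illustration only.
office_a = DisclosureRegime("Office A", True, True, False, False)
office_b = DisclosureRegime("Office B", True, True, True, False)

print(gaps(office_a))   # ['publication_cadence', 'evidence_standards']
print(compare(office_a, office_b)["publication_cadence"])  # (False, True)
```

Scoring on documented variables rather than on personalities keeps the comparison stable from one news cycle to the next.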

That rubric also clarifies why early offices like Peru’s matter even when public outputs are thin: they highlight the difference between an intake function and a durable, auditable reporting system.

Blueprint for credible UAP transparency

Credible UAP transparency is operational. If disclosure cannot be measured, audited, and repeated, it will not accumulate trust across election cycles, leadership changes, or the next spike in media coverage. OIFAA’s existence is the proof-of-concept: a government can institutionalize intake instead of treating reports as ad hoc anecdotes. The gap is just as instructive: based on the available research, you cannot claim OIFAA publishes public reports or maintains a public-facing output stream, which is exactly where most transparency efforts fail in practice.

That is why modern transparency debates increasingly center on governance choices (cadence, metrics, redaction discipline, and oversight) rather than on promises of a single definitive reveal.

Minimum viable transparency is what the public gets every time, even when conclusions are scarce. Use France’s GEIPAN as the benchmark for cadence: since 2005 it has undertaken investigations and regularly issued information to the French public. The governance point is cadence, not spectacle.

Publish, at minimum: (1) intake categories (civilian, military, sensor-only, legacy report), (2) a standardized data schema for each case (time, location granularity, sensor types, confidence fields), (3) methodology at a “how we work” level (triage, prioritization criteria, evidentiary thresholds), (4) timelines (target time-to-triage, target time-to-initial update), and (5) explicit limitations (what you cannot release and why).
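A published schema can be as simple as a fixed record serialized to JSON. The example below is one possible shape, assuming the intake categories and fields listed above; the `PublicCaseEntry` name and every field name are hypothetical, not drawn from any office's actual format.

```python
import json
from dataclasses import asdict, dataclass

# Intake categories as listed in the text.
INTAKE_CATEGORIES = {"civilian", "military", "sensor-only", "legacy report"}


@dataclass
class PublicCaseEntry:
    """Hypothetical public-facing case record."""
    case_id: str
    intake_category: str
    observed_date: str      # date only, coarser than the internal timestamp
    location_region: str    # coarse region, not precise coordinates
    sensor_types: list
    confidence: str         # stated confidence level
    disposition: str
    limitations: str        # what could not be released and why


def to_public_json(entry: PublicCaseEntry) -> str:
    """Validate the intake category and serialize with stable key order."""
    if entry.intake_category not in INTAKE_CATEGORIES:
        raise ValueError(f"unknown intake category: {entry.intake_category!r}")
    return json.dumps(asdict(entry), sort_keys=True, indent=2)


entry = PublicCaseEntry(
    case_id="CASE-0001",            # placeholder values throughout
    intake_category="military",
    observed_date="2024-01-01",
    location_region="example region",
    sensor_types=["radar"],
    confidence="low",
    disposition="insufficient data",
    limitations="sensor parameters withheld",
)
print(to_public_json(entry))
```

Keeping the limitations field mandatory means every published entry states what was withheld, even when the answer is "nothing".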

Disclosure is legally bounded. Public release can be denied when disclosure would invade personal privacy and that privacy interest outweighs the public interest, and even seemingly basic service-related information can be restricted without authorization. Build that constraint into the design rather than improvising it after a headline.

Write redaction rules into policy: redaction is the removal or masking of sensitive details before public release. The discipline is consistency. Define what gets redacted (identifiers, precise coordinates, collection parameters), who can approve it, and how you document what was removed without leaking the sensitive content.
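Consistent redaction can be sketched as a function that masks a fixed set of fields and logs which fields were removed, without logging their values. The field names and the approver string below are illustrative assumptions, not a real policy.

```python
def redact(record: dict, sensitive_fields: set, approver: str):
    """Return (public_record, redaction_log).

    The log names which fields were masked and who approved it, but
    never stores the sensitive values themselves.
    """
    public = {}
    removed = []
    for key, value in record.items():
        if key in sensitive_fields:
            public[key] = "[REDACTED]"
            removed.append(key)
        else:
            public[key] = value
    log = {"approved_by": approver, "removed_fields": sorted(removed)}
    return public, log


# Hypothetical policy: identifiers, precise coordinates, collection parameters.
SENSITIVE = {"witness_name", "precise_coordinates", "collection_parameters"}

internal = {
    "case_id": "CASE-0001",                 # placeholder values
    "witness_name": "example name",
    "precise_coordinates": "example coordinates",
    "location_region": "example region",
}
public, log = redact(internal, SENSITIVE, approver="review officer")
print(public["witness_name"])   # [REDACTED]
print(log["removed_fields"])    # ['precise_coordinates', 'witness_name']
```

Publishing the log alongside the record lets an outside reviewer see that something was withheld and by whose authority, which is what makes the redaction auditable.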

Witness protections have to be operational: confidential intake paths, anti-retaliation commitments for government personnel, and a clean separation between reporting and enforcement functions when applicable.

Treat timeliness as a first-class metric. Emergency medicine offers a model: “door to doctor” measures the time from a patient’s registration to first contact with a provider, and indicator frameworks of that kind enable timely, cost-effective performance assessment. Use the same logic: publish median time-to-triage and median time-to-first-public-update, plus backlog size and aging.
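These metrics are cheap to compute once intake and triage timestamps are recorded. The sketch below assumes each case is a dictionary with `received` and `triaged` datetimes, with `triaged` set to `None` while a case waits in the backlog; those key names and the sample data are hypothetical.

```python
from datetime import datetime
from statistics import median


def timeliness_report(cases: list, now: datetime) -> dict:
    """Compute median hours to triage, backlog size, and oldest backlog age."""
    triage_hours = [
        (c["triaged"] - c["received"]).total_seconds() / 3600
        for c in cases
        if c["triaged"] is not None
    ]
    backlog = [c for c in cases if c["triaged"] is None]
    return {
        "median_hours_to_triage": median(triage_hours) if triage_hours else None,
        "backlog_size": len(backlog),
        "oldest_backlog_days": max(
            ((now - c["received"]).days for c in backlog), default=0
        ),
    }


# Placeholder data for illustration.
cases = [
    {"received": datetime(2024, 1, 1, 0), "triaged": datetime(2024, 1, 1, 2)},
    {"received": datetime(2024, 1, 2, 0), "triaged": datetime(2024, 1, 2, 6)},
    {"received": datetime(2024, 1, 3, 0), "triaged": datetime(2024, 1, 3, 10)},
    {"received": datetime(2024, 1, 5, 0), "triaged": None},
]
report = timeliness_report(cases, now=datetime(2024, 1, 10, 0))
print(report)
# {'median_hours_to_triage': 6.0, 'backlog_size': 1, 'oldest_backlog_days': 5}
```

The median is used rather than the mean so that a handful of very old cases does not hide how the typical report is handled; backlog age reports those old cases separately.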

Independent review is non-negotiable: an inspector-general style function or an external review panel that audits sampling integrity, redaction compliance, and metric definitions. Pair that with a fixed publication cadence.

One more governance signal matters in 2025 and 2026: a Senate amendment in the 118th Congress was proposed to provide for expeditious disclosure of unidentified anomalous phenomena records. Proposed or enacted, the direction of travel is measurable speed and auditable process.

- Verify intake: Is there a standing, year-round reporting channel with a published schema?

- Check methodology: Are triage rules, limitations, and redaction standards public?

- Grade protection: Are witness privacy and retaliation risks addressed in policy and practice?

- Demand metrics: Are time-to-triage, backlog, and disposition rates published on a cadence?

- Confirm oversight: Is there independent review with authority to audit and report?

Conclusion

The most consequential part of “UAP disclosure” is not a headline claim. It is whether an institution can intake reports, investigate them with discipline, and communicate results without contaminating the evidence trail or public trust.

Peru’s OIFAA, as described in this piece, is best understood as an early example (2001) of institutionalizing UAP intake and investigation inside a military context, shaped by airspace and security incentives. The same standard applies here that applies to today’s debates: credibility turns on a documented mandate, a defined reporting chain, and auditable outputs.

Modern expectations are higher. AARO’s establishment, statutory posture, and published reporting tools signal a shift toward partial transparency and formal, documented intake as the baseline, not the exception. That shift also raises the bar: an office is judged by repeatable triage, chain of custody, confidence language, and disciplined redaction, not by how sensational its cases sound.

Peru’s history also shows how fragile continuity can be: earlier reporting mechanisms did not endure, and the Peruvian Air Force’s DIFAA later recorded what it described as its first officially documented UFO case. Institutional capacity is not a vibe; it is a maintained system.

Evidence limitation, stated plainly: the provided research did not surface OIFAA charter documents or any public-facing OIFAA report outputs. None of the provided sources are identifiable as OIFAA materials, and none include an “OIFAA” string, name, or obvious OIFAA domain.

- Track official releases, not recaps: look for dated memos, declassified attachments, case lists, and formal reporting language, not just interviews.

- Demand methodology and confidence levels: credible updates state what data was collected, what was excluded, and how “unresolved” differs from “extraordinary.”

- Treat whistleblower claims as leads: prioritize documents, chain-of-custody details, and corroborable timelines over conclusions.

- Request records formally where applicable using FOIA and, for classified holdings, MDR pathways and oversight channels like OGIS for process disputes.

FOIA is the legal tool for access to agency records, MDR is commonly used for classified material, and OGIS exists to review FOIA policies and compliance. Those mechanisms won’t guarantee disclosure, but they force claims to meet standards.

Use this rule and you avoid both default errors: reflexive “cover-up” certainty and reflexive “aliens” certainty.

Frequently Asked Questions

What does UAP mean, and how is it different from UFO?

UAP (unidentified anomalous phenomena) refers to an observation or event whose cause can’t be identified from available data at the time. UFO (unidentified flying object) is the older umbrella term, while current institutional discussions often use UAP to reflect broader “all-domain” anomalies.

When did Peru create OIFAA, and why is it considered significant in UAP investigations?

Peru’s Air Force-backed office is widely cited as being created in December 2001, long before U.S. efforts became mainstream. The article notes the exact Peruvian legal instrument and full official title were not found in the provided research, but repeated reporting places its creation in late 2001.

Why did Peru launch a military UAP office in 2001?

Peru faced high-stakes airspace pressure in a counter-trafficking environment where the government had adopted legal and operational measures authorizing interdiction of suspected drug-trafficking aircraft. The article cites Decree Law Number 25426 and Air Bridge Denial procedures in that context. Peruvian Air Force assessments put the average number of international trafficking aircraft at roughly 270, making disciplined identification and triage of unknown tracks operationally important.

Who led Peru’s OIFAA-related effort, according to the article?

The Government of Peru designated Peruvian Air Force Major General Jorge Kisic Wagner, Commander of Operations, as the team leader. The article presents this as a sign the effort was tied to senior operational command rather than treated as a public-relations function.

What are the key steps in a credible military UAP investigation workflow?

The article describes a typical pipeline: intake, triage, interview and data capture, corroboration and sensor checks, analytic write-up, and archival and classification handling. It emphasizes chain of custody for evidence integrity and a structured write-up that separates observations from inferences.

What outcomes do UAP investigations usually end with (identified vs unresolved)?

Most cases end in one of three buckets: identified and resolved, insufficient data, or unresolved but open. The article states unresolved does not imply non-human intelligence; it means the office can’t close the case without better data or corroboration.

What should I look for to judge whether a UAP disclosure claim is credible?

The article’s rubric is to demand a documented mandate, a defined reporting chain, and auditable public outputs. It also recommends checking for data quality (original logs and metadata), corroboration across sources, and a stated confidence level tied to evidence completeness.